It is 6:47 AM. Dr. Patel is already at her workstation, coffee in hand, staring at a worklist that will not get shorter for the next ten hours. Across the hall, Dr. Gupta is finishing up a nighthawk batch from the overnight ER feed. Dr. Rao will not arrive until eight, but his afternoon is already stacked — 60 studies minimum, plus callbacks from yesterday.

This is a 3-radiologist practice. No fellows. No residents. One part-time tech who handles the PACS queue and scheduling. Between the three of them, they read somewhere north of 150 studies on a typical weekday, more during flu season or when the local urgent care center sends over a weekend backlog on Monday morning.

They are good at what they do. They are also tired.

If you run a practice like this, or manage one, you have probably seen the ads for AI-powered radiology tools. And you have probably asked the same question every small practice owner asks: “Is this actually worth it for us, or is it built for the big hospital systems?”

This article is an honest attempt to answer that question with real numbers.

The math of a small radiology practice

Let us start with the baseline. A 3-radiologist outpatient or mixed practice typically handles:

- 150 to 200 studies per day across all modalities

- 50 to 70 studies per radiologist per day, with chest X-rays often making up 30 to 40 percent of volume

- Average read time of 3 to 5 minutes per study, depending on complexity

- Report turnaround expectation of under 2 hours for routine, under 30 minutes for stat reads

That means each radiologist spends roughly 4 to 6 hours of their day actively interpreting images and dictating reports. The rest goes to consultations, callbacks, peer review, and the administrative overhead that no one talks about in medical school.

Now layer in the fatigue factor. Published literature consistently shows that diagnostic accuracy degrades after 3 to 4 hours of continuous reading. A 2023 study in Radiology found that miss rates for subtle findings on chest radiographs increased by approximately 25 percent in the final two hours of a reading session compared to the first two. Your radiologists know this. They compensate with extra diligence, double-takes, and the nagging feeling that they might have missed something on study number 47.

This is the environment AI is designed to help with — not the first read of the day when everyone is sharp, but the 50th.

Where AI fits: augmentation, not replacement

Let us be direct about what AI radiology tools do and do not do in a practice like this.

AI does not replace the radiologist’s read. No responsible AI vendor should claim otherwise, and no regulatory framework supports that claim. The radiologist remains the interpreting physician. The report is theirs. The liability is theirs.

What AI does is act as a second set of eyes — a computational colleague that never gets tired, never rushes before lunch, and processes every pixel of every image with the same consistency whether it is study number 1 or study number 1,500.

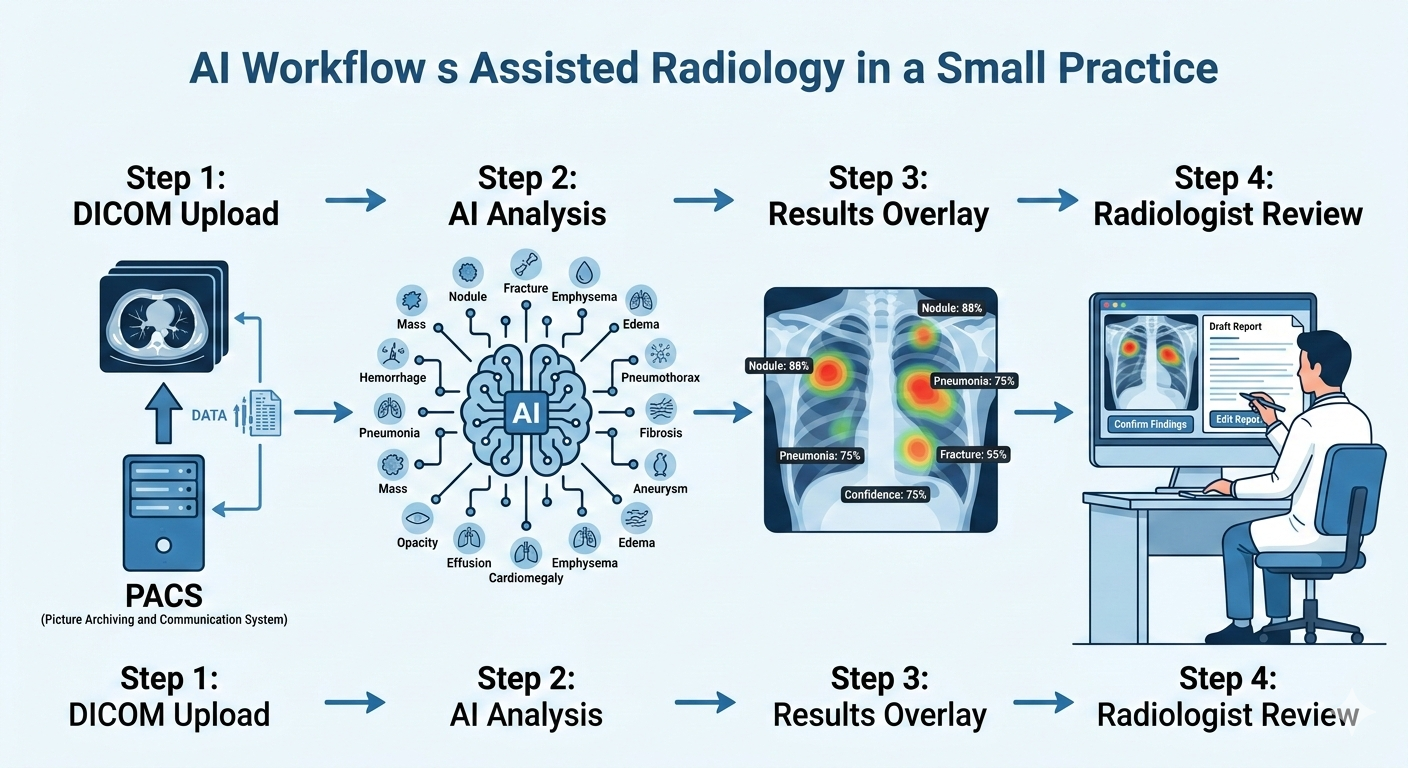

With AI Bharata’s MYAIRA Professional plan, the AI workflow integrates into your existing reading process:

- Studies are uploaded via DICOM or basic PACS integration. No workflow overhaul required.

- The AI runs its analysis across 15+ pathology categories on chest X-rays — pneumothorax, pleural effusion, cardiomegaly, consolidation, nodules, rib fractures, and more.

- Results appear as a structured overlay: heatmap localization showing where the AI detected findings, confidence scores for each detection, and a priority triage flag for urgent cases.

- A draft structured report is auto-generated, which the radiologist can accept, modify, or discard entirely.

The radiologist still reads the study. But now they read it with a pre-screened summary that highlights regions of concern, flags studies that need immediate attention, and provides a structured starting point for the final report.

The time savings: where minutes become hours

Here is where the numbers start to matter for a small practice.

Triage time savings. Without AI, someone — usually the radiologist or that overworked tech — manually scans the worklist to identify urgent cases. With AI priority triage, critical findings like pneumothorax or large effusions get flagged automatically and pushed to the top. For a practice reading 150+ studies per day, this alone can save 20 to 30 minutes of daily triage overhead across the group.

Read time reduction. When the AI pre-screens a study and presents a heatmap with confidence-scored findings, the radiologist’s interpretation becomes more focused. They are not scanning the entire image from scratch; they are confirming or refuting AI-flagged regions and then doing their own systematic review. Studies on AI-assisted radiology workflows consistently show a 15 to 25 percent reduction in average read time per study. On 50 chest X-rays per radiologist per day, that translates to roughly 20 to 40 minutes saved per radiologist — or 60 to 120 minutes across the practice.
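Here is a minimal sketch of the read-time arithmetic. It assumes 3 minutes per chest X-ray, the low end of the 3-to-5-minute average quoted earlier, because the article does not give a CXR-specific read time. `READ_MINUTES_PER_CXR` is a hypothetical input, so swap in your own figures.

```python
# Read-time savings from a fractional reduction in per-study read time.
# ASSUMPTION: 3 minutes per chest X-ray (hypothetical; low end of the 3-5 min average).
CXR_PER_RADIOLOGIST = 50      # daily chest X-rays per radiologist, as stated above
READ_MINUTES_PER_CXR = 3.0    # hypothetical input, replace with your own data
RADIOLOGISTS = 3


def read_time_saved(reduction: float) -> float:
    """Minutes saved per radiologist per day for a given fractional reduction."""
    return CXR_PER_RADIOLOGIST * READ_MINUTES_PER_CXR * reduction


low, high = read_time_saved(0.15), read_time_saved(0.25)
print(f"Per radiologist: {low:.1f} to {high:.1f} min")
print(f"Practice: {low * RADIOLOGISTS:.1f} to {high * RADIOLOGISTS:.1f} min")
```

With these inputs it prints 22.5 to 37.5 minutes per radiologist and 67.5 to 112.5 minutes across the practice. That is close to the rounded ranges in the paragraph above.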

Report generation. Dictating a structured chest X-ray report takes 1 to 2 minutes. Reviewing and editing an AI-generated draft report takes 20 to 40 seconds. Across 50 to 60 CXR reads per day for the practice, that is another 30 to 50 minutes saved.
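If you take the stated ranges at face value, this short sketch brackets the smallest and largest possible daily saving from report drafting. It uses only the numbers in the paragraph above.

```python
# Report-drafting savings, bracketed by the ranges stated in the text.
DICTATE_SECONDS = (60, 120)   # 1 to 2 minutes to dictate a structured CXR report
EDIT_SECONDS = (20, 40)       # 20 to 40 seconds to review and edit an AI draft
CXR_PER_DAY = (50, 60)        # practice-wide chest X-ray reads per day

# Smallest saving: fastest dictation vs. slowest edit, at the lowest volume.
low_min = (DICTATE_SECONDS[0] - EDIT_SECONDS[1]) * CXR_PER_DAY[0] / 60
# Largest saving: slowest dictation vs. fastest edit, at the highest volume.
high_min = (DICTATE_SECONDS[1] - EDIT_SECONDS[0]) * CXR_PER_DAY[1] / 60

print(f"{low_min:.0f} to {high_min:.0f} minutes saved per day")
```

It prints "17 to 100 minutes saved per day". The 30-to-50-minute estimate falls inside that span, toward its conservative end.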

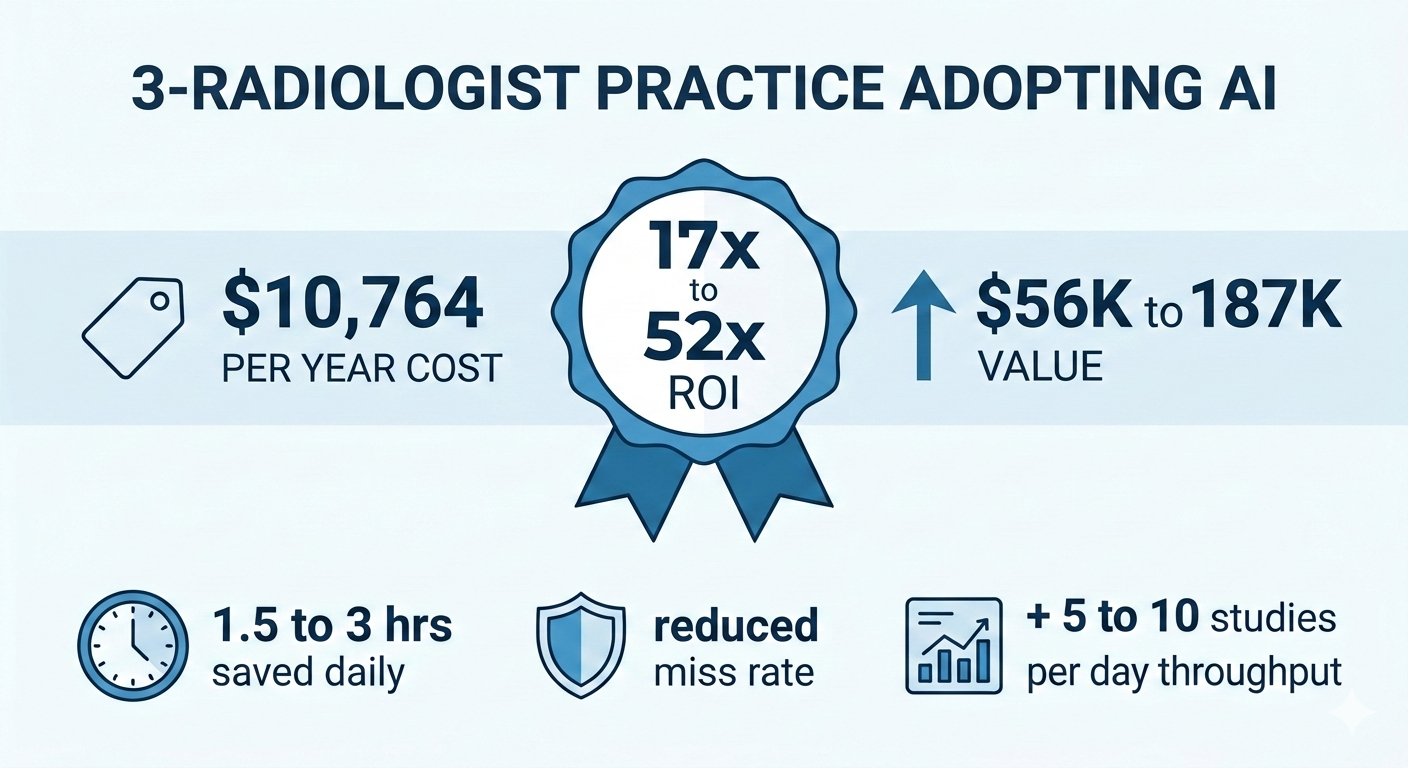

Total estimated daily time savings: 1.5 to 3 hours across a 3-radiologist practice.
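The total is simply the sum of the three component estimates above. This sketch uses the article's own ranges, in minutes per day across the practice.

```python
# Sum the component estimates (minutes per day, practice-wide) from this section.
components = {
    "triage": (20, 30),
    "read_time": (60, 120),
    "report_drafting": (30, 50),
}

total_low = sum(low for low, _ in components.values())
total_high = sum(high for _, high in components.values())
print(f"{total_low} to {total_high} min ({total_low / 60:.1f} to {total_high / 60:.1f} h)")
```

It prints "110 to 200 min (1.8 to 3.3 h)". The 1.5-to-3-hour headline is a slightly more conservative rounding of that sum.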

That is not a small number. That is the difference between finishing at 5:30 PM and finishing at 7:00 PM. That is one fewer Saturday shift per month. That is time for the peer review meeting that keeps getting postponed.

The money math

Now the question you actually want answered.

MYAIRA Professional plan: $299 per month per seat.

For a 3-radiologist practice, that is $897 per month, or $10,764 per year. Each seat includes up to 2,000 AI analyses per month, or up to 6,000 across three seats. That comfortably covers a practice reading 150+ studies per day, particularly since only the chest X-ray share of that volume needs AI analysis.
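This sketch reproduces the subscription arithmetic and compares the analysis allowance with monthly study volume. Two assumptions are hypothetical. First, it treats the three seats' allowances as one combined pool. Second, it uses 250 working days per year, the same figure as the time-value estimate, divided evenly across 12 months.

```python
# Subscription cost and analysis capacity for three seats.
SEAT_PRICE_USD = 299
SEATS = 3
ANALYSES_PER_SEAT = 2_000
WORKING_DAYS_PER_YEAR = 250   # ASSUMPTION: spread evenly over 12 months

monthly_cost = SEAT_PRICE_USD * SEATS           # 897
annual_cost = monthly_cost * 12                 # 10,764
monthly_capacity = ANALYSES_PER_SEAT * SEATS    # 6,000, if seats are pooled (assumption)

# Upper-bound monthly volume: every study counted, not just chest X-rays.
for studies_per_day in (150, 200):
    monthly_volume = studies_per_day * WORKING_DAYS_PER_YEAR / 12
    print(f"{studies_per_day}/day -> ~{monthly_volume:,.0f}/month vs {monthly_capacity:,} capacity")
```

Even if every study counted, 150 to 200 studies per day works out to roughly 3,125 to 4,167 analyses per month, which is under the 6,000 total.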

Here is what that buys you:

Value of time saved. A radiologist’s professional time is worth, conservatively, $150 to $250 per hour depending on market and subspecialty. If the practice saves 1.5 to 3 hours per day, that is $225 to $750 in daily professional time value. Over 250 working days per year, the time value alone is $56,250 to $187,500 annually — against a cost of $10,764.

Even if you cut those estimates in half to be conservative, the ROI is substantial.
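The time-value estimate and the "cut in half" check can be reproduced in a few lines. All inputs come from the two paragraphs above.

```python
# Annual value of saved radiologist time vs. the subscription cost.
HOURLY_VALUE = (150, 250)        # USD per radiologist-hour
HOURS_SAVED_PER_DAY = (1.5, 3.0)
WORKING_DAYS = 250
ANNUAL_COST = 10_764

daily = (HOURLY_VALUE[0] * HOURS_SAVED_PER_DAY[0], HOURLY_VALUE[1] * HOURS_SAVED_PER_DAY[1])
annual = tuple(d * WORKING_DAYS for d in daily)
halved = tuple(a / 2 for a in annual)    # the conservative "cut in half" case

print(f"Daily: ${daily[0]:,.0f} to ${daily[1]:,.0f}")
print(f"Annual: ${annual[0]:,.0f} to ${annual[1]:,.0f}")
print(f"Halved: ${halved[0]:,.0f} to ${halved[1]:,.0f} vs ${ANNUAL_COST:,} cost")
print(f"Halved value / cost: {halved[0] / ANNUAL_COST:.1f}x to {halved[1] / ANNUAL_COST:.1f}x")
```

Even the halved range, $28,125 to $93,750, works out to about 2.6x to 8.7x the annual subscription cost.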

Value of reduced missed findings. This is harder to quantify but arguably more important. A single missed pneumothorax that leads to patient decompensation can result in a malpractice settlement of $200,000 to $500,000 or more, plus the immeasurable cost of patient harm. Malpractice premiums for radiologists already run $15,000 to $40,000 per year. If AI-assisted second reads reduce your miss rate even marginally (and the literature suggests they do), the risk mitigation value alone can justify the subscription cost.

Value of throughput. If the time saved allows your practice to read even 5 to 10 additional studies per day without extending hours, at an average professional component reimbursement of $25 to $50 per study over 250 working days, that is roughly $31,000 to $125,000 in additional annual revenue.
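The throughput figure is extra studies per day times reimbursement per study times working days. This sketch uses the same 250-day year as the time-value estimate.

```python
# Additional annual professional-component revenue from extra daily reads.
EXTRA_STUDIES_PER_DAY = (5, 10)
REIMBURSEMENT_USD = (25, 50)   # average professional component per study
WORKING_DAYS = 250

low = EXTRA_STUDIES_PER_DAY[0] * REIMBURSEMENT_USD[0] * WORKING_DAYS
high = EXTRA_STUDIES_PER_DAY[1] * REIMBURSEMENT_USD[1] * WORKING_DAYS
print(f"${low:,} to ${high:,} per year")
```

It prints "$31,250 to $125,000 per year".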

We are not going to pretend these numbers are precise. Every practice is different. But the direction is clear: for a practice at this volume, the math works.

The quality argument

Beyond dollars and hours, there is a clinical quality case that matters to radiologists who care about their work — which is all of them.

Fatigue-related diagnostic errors are one of the most studied problems in radiology. The data is unambiguous:

- Perceptual errors account for 60 to 80 percent of diagnostic mistakes in radiology, according to research published in the American Journal of Roentgenology.

- AI second-read systems have demonstrated the ability to catch findings that human readers miss, particularly subtle nodules, small pneumothoraces, and early consolidation patterns.

- Concurrent AI feedback during the reading session has been shown to reduce false-negative rates by 10 to 30 percent in controlled studies on chest radiograph interpretation.

For a 3-radiologist practice without the safety net of a large department’s peer review infrastructure, an AI second reader provides a layer of quality assurance that is otherwise difficult to achieve. It does not catch everything. It is not infallible. But it is consistent, and it does not have a bad day.

MYAIRA’s confidence scoring adds nuance here. The AI does not just say “finding present” — it provides a calibrated confidence level, so the radiologist can quickly assess whether the AI’s detection aligns with their own impression or warrants a closer look. This is how a good colleague works: not overriding your judgment, but giving you a reason to pause and reconsider when the data warrants it.

What the 14-day trial actually looks like

If you are considering this for your practice, you probably want to know what the onboarding disruption looks like. Here is the honest answer: it is minimal.

Day 1. Sign up at medixshare.aibharata.com. No credit card required. You get access to the Professional plan dashboard with 3 seats.

Days 1 through 3. Upload studies via DICOM. If your PACS supports basic send-to functionality, you can route studies to MYAIRA in parallel with your existing workflow. The AI processes them and presents results in the MYAIRA interface. Your radiologists do not need to change how they read — they just have an additional screen or tab showing AI results alongside their normal PACS viewer.

Days 4 through 10. Your radiologists start to develop a feel for the AI’s performance. They will notice studies where the AI flagged something they might have spent extra time on. They will also notice false positives — the AI flagging a finding that turns out to be artifact or normal variant. This is normal. The goal during the trial is not perfection; it is pattern recognition. Does the AI add value to your workflow more often than it adds noise?

Days 11 through 14. You have enough data to make a decision. Look at how many studies the AI flagged, how many of those flags were clinically useful, and whether your read times or end-of-day fatigue levels shifted.
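Here is one way to turn the trial into numbers. It is a minimal sketch that assumes your radiologists keep a simple per-study log during the trial. The `TrialCase` record, its fields, and the sample data are all hypothetical, not a MYAIRA export format. The sketch computes the share of AI flags judged clinically useful and the mean read time, which you can compare against a pre-trial baseline.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class TrialCase:
    """One study reviewed during the trial (hypothetical log format)."""
    ai_flagged: bool
    flag_useful: bool      # radiologist judged the flag clinically useful; only meaningful if flagged
    read_minutes: float


def summarize(cases: list[TrialCase]) -> dict[str, float]:
    """Flag counts, useful-flag rate, and mean read time for a trial period."""
    flagged = [c for c in cases if c.ai_flagged]
    useful = [c for c in flagged if c.flag_useful]
    return {
        "studies": len(cases),
        "flagged": len(flagged),
        "useful_flag_rate": len(useful) / len(flagged) if flagged else 0.0,
        "mean_read_minutes": sum(c.read_minutes for c in cases) / len(cases) if cases else 0.0,
    }


# Hypothetical sample: four studies, three flagged, two of those flags useful.
cases = [
    TrialCase(ai_flagged=True, flag_useful=True, read_minutes=2.5),
    TrialCase(ai_flagged=True, flag_useful=False, read_minutes=4.0),
    TrialCase(ai_flagged=False, flag_useful=False, read_minutes=3.0),
    TrialCase(ai_flagged=True, flag_useful=True, read_minutes=3.5),
]
summary = summarize(cases)
print(summary)
```

A useful-flag rate well above the rate of noise flags is the "more value than noise" signal described in days 4 through 10.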

The trial runs in parallel. You do not need to restructure anything. If it does not work for your practice, you stop. No cancellation calls, no contracts.

One additional note: Medixshare, AI Bharata’s collaborative case-sharing network, is included free with the Professional plan. For a small practice without easy access to subspecialty second opinions, this can be a meaningful bonus — but that is a topic for another article.

The honest answer

We promised honesty, so here it is.

AI-powered radiology is worth it for your 3-radiologist practice if:

- You are reading 100+ studies per day and chest X-rays make up a significant portion of your volume.

- Your radiologists are working long shifts and you are concerned about fatigue-related quality degradation.

- You have experienced or are worried about missed findings and the associated liability exposure.

- You want to increase throughput without hiring a fourth radiologist, which would cost $350,000 to $500,000 per year in salary and benefits alone.

- You value structured, consistent reporting and want to reduce dictation overhead.

- Your practice is growing and you need to scale read capacity without scaling headcount proportionally.

It might not be worth it if:

- Your volume is low enough that each radiologist is reading 20 to 30 studies per day with ample time between reads. Fatigue is less of a factor, and the AI’s triage value diminishes at low volume.

- Your case mix is almost entirely non-CXR modalities that the current AI does not cover. (MYAIRA’s pathology detection is focused on chest X-rays today, with additional modalities on the roadmap.)

- You are in a setting where all studies already receive dual-radiologist reads as a matter of protocol. The AI adds less incremental value when human double-reading is already standard.

- Your PACS infrastructure is highly customized and you are not willing to add a parallel workflow during evaluation.

Most 3-radiologist practices we talk to fit the first profile, where the AI is worth it. But we would rather you make the decision with clear eyes than sign up and feel oversold.

Try it and decide with your own data

The best way to answer “is it worth it?” is to run it against your own worklist for two weeks.

MYAIRA’s Professional plan is $299 per month per seat, with up to 2,000 AI analyses per month per seat. The 14-day trial is free, requires no credit card, and runs alongside your existing workflow with minimal disruption.

Fifteen-plus pathology detections on chest X-ray. Heatmap localization. Confidence scoring. Priority triage. Structured auto-reports. DICOM upload with basic PACS integration. Three seats for your three radiologists.

If the numbers work for you the way they work on paper, you will know within the two-week trial. If they do not, you will know that too, and you will have lost nothing but a few minutes of setup time.