The chest X-ray was the proving ground. Over the past several years, AI systems learned to read posteroanterior and lateral films with remarkable accuracy — detecting pneumothorax, cardiomegaly, pleural effusions, consolidations, and a growing list of pathologies that now exceeds fifteen distinct conditions in production-grade systems. Results come back in under three seconds, complete with heatmap localization and confidence scores. For a technology that many clinicians once dismissed as hype, chest X-ray AI has quietly become real.

But here is the thing most people outside radiology do not fully appreciate: chest X-rays are only one part of diagnostic imaging. They account for roughly 40% of all imaging studies by volume, which is precisely why AI started there. The remaining 60% (CT, MRI, mammography, ultrasound, pathology, and specialized modalities) is where the next decade of medical imaging AI will be built. And the technical, clinical, and operational challenges of that expansion are fundamentally different from what it took to get chest X-ray AI right.

This is a look at where multi-modality AI is headed, what is genuinely close, what remains hard, and what it will take to make it work in real clinical environments.

Why Chest X-Rays Came First

It was not an accident that chest X-ray AI matured before other modalities. Several factors aligned to make it the ideal starting point.

Volume and standardization. Chest X-rays are the single most commonly ordered imaging study worldwide. The positioning is standardized — PA and lateral views follow well-established protocols. This consistency means training datasets are relatively uniform, which matters enormously when you are teaching a neural network to detect subtle abnormalities.

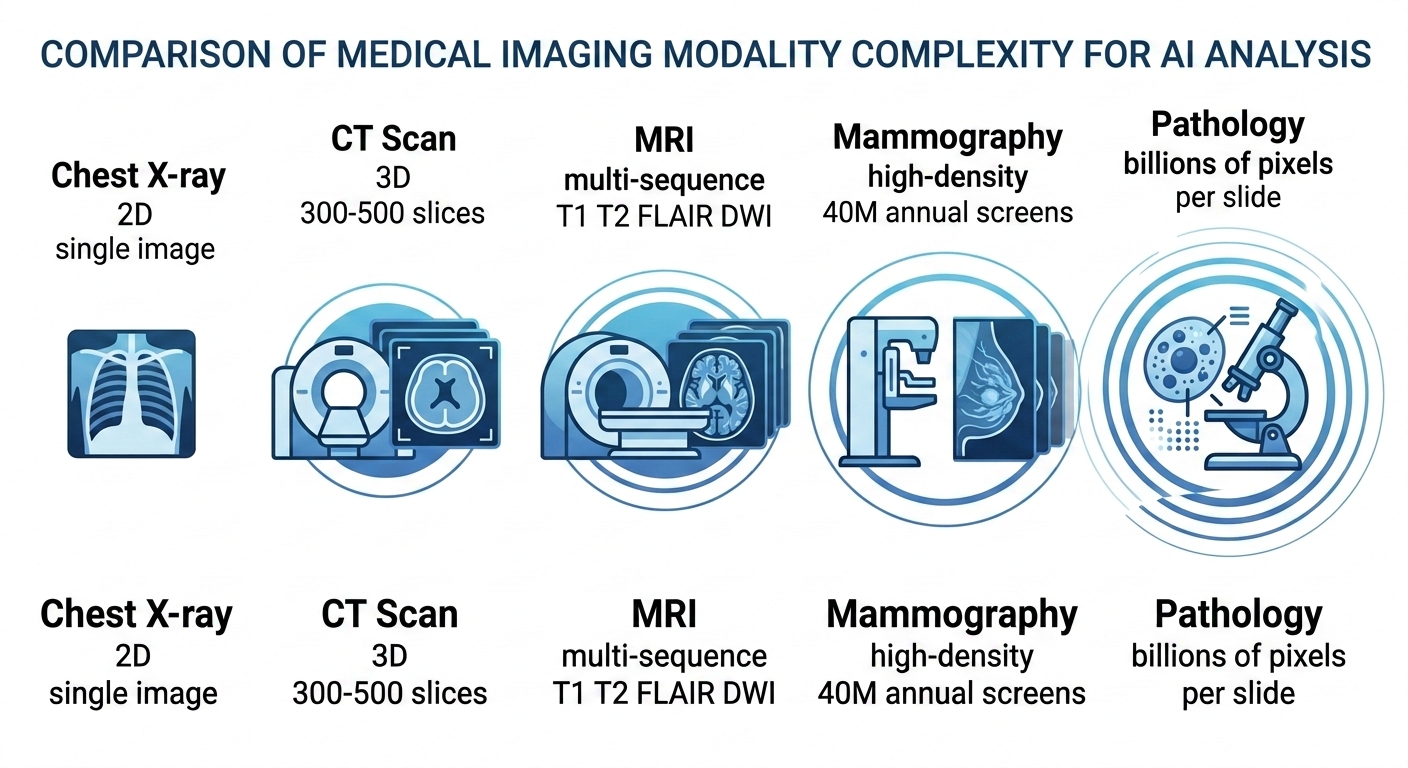

Two-dimensional images. A chest X-ray is a single 2D projection. Compared to a CT scan with hundreds of slices or an MRI with multiple sequences, the computational and algorithmic complexity is orders of magnitude lower. Early convolutional neural networks were well-suited to this kind of input.

Clear clinical need. Emergency departments, ICUs, and primary care clinics generate enormous volumes of chest films that often wait hours for a radiologist read. The use case — triage, prioritization, preliminary screening — was immediate and obvious.

Public datasets. Large labeled datasets like CheXpert, MIMIC-CXR, and NIH ChestX-ray14 gave researchers a shared foundation. Few other imaging modalities have benefited from the same scale of publicly available, annotated data.

All of this made chest X-ray AI the natural first chapter. But the next chapters look very different.

CT Scan AI: The Next Frontier

Computed tomography is where multi-modality AI will likely make its most significant near-term impact. CT scans are high-volume, clinically critical, and increasingly standardized — but they introduce complexity that chest X-ray AI never had to handle.

A single chest CT can contain 300 to 500 axial slices. A full-body trauma scan might have over a thousand. The AI system is no longer classifying a single 2D image; it is analyzing a volumetric dataset, identifying structures in three dimensions, and detecting abnormalities that may span only two or three slices.

Lung nodule detection and characterization is the most mature CT AI application. Systems can now identify sub-centimeter nodules, estimate their volume, and track growth over serial studies. For lung cancer screening programs, where radiologists review hundreds of low-dose CTs per week, AI-assisted detection reduces miss rates and standardizes the measurement of indeterminate nodules. The Lung-RADS classification framework provides a natural structure for AI output.
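To make the growth-tracking idea concrete, here is a minimal Python sketch of the volume doubling time calculation commonly used when comparing serial nodule measurements. It assumes exponential growth between the two scans and, for the diameter helper, a perfectly spherical nodule. The function names are illustrative and do not describe any particular product's implementation.

```python
import math


def nodule_volume_mm3(diameter_mm: float) -> float:
    """Approximate nodule volume from its diameter, assuming a sphere."""
    if diameter_mm <= 0:
        raise ValueError("diameter must be positive")
    return math.pi * diameter_mm ** 3 / 6.0


def volume_doubling_time_days(v1_mm3: float, v2_mm3: float, interval_days: float) -> float:
    """Volume doubling time under an exponential growth assumption.

    VDT = interval * ln(2) / ln(V2 / V1)
    A negative result means the nodule shrank; no change returns infinity.
    """
    if v1_mm3 <= 0 or v2_mm3 <= 0 or interval_days <= 0:
        raise ValueError("volumes and interval must be positive")
    if v1_mm3 == v2_mm3:
        return math.inf
    return interval_days * math.log(2) / math.log(v2_mm3 / v1_mm3)


if __name__ == "__main__":
    baseline = nodule_volume_mm3(6.0)   # 6 mm nodule at baseline
    follow_up = nodule_volume_mm3(7.0)  # 7 mm nodule 90 days later
    print(round(volume_doubling_time_days(baseline, follow_up, 90)))
```

A small change in diameter corresponds to a much larger change in volume, which is one reason volumetric tracking is more sensitive than comparing diameters by eye.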

Stroke detection from CT angiography is another area with strong clinical momentum. Large vessel occlusion detection — identifying the clot causing an acute ischemic stroke — is time-critical in a way that few other imaging findings are. Every minute of delay in treatment translates to measurable brain tissue loss. AI systems that flag LVO cases and push them to the top of the reading queue have demonstrated real improvements in door-to-treatment times.

Abdominal CT presents a broader challenge. Liver lesion characterization, pancreatic mass detection, renal stone measurement, bowel obstruction identification — the list of potential applications is long, and each requires specialized training data and validation. Progress here is steady but fragmented, with different vendors focusing on different anatomical targets.

The throughput demands are significant. Analyzing a 500-slice CT dataset requires substantially more compute than a single chest film. Systems that deliver results in under three seconds for a chest X-ray may need different architectural approaches to maintain clinically acceptable turnaround times on volumetric data.
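One common way to keep volumetric inference tractable is to process the study in overlapping slabs rather than as one large input. The Python sketch below only computes the slice ranges; the slab size and overlap are hypothetical values chosen for illustration, and real systems may split volumes very differently.

```python
def slab_ranges(num_slices: int, slab_size: int = 32, overlap: int = 4) -> list[tuple[int, int]]:
    """Split a CT volume into overlapping (start, end) slice ranges.

    Ranges are half-open: slices start..end-1. The overlap keeps a finding
    that spans only two or three slices from being cut at a slab boundary.
    """
    if num_slices <= 0:
        return []
    if slab_size <= 0 or not 0 <= overlap < slab_size:
        raise ValueError("need slab_size > 0 and 0 <= overlap < slab_size")

    step = slab_size - overlap
    ranges = []
    start = 0
    while True:
        end = min(start + slab_size, num_slices)
        ranges.append((start, end))
        if end == num_slices:
            break
        start += step
    return ranges


if __name__ == "__main__":
    slabs = slab_ranges(500)
    print(len(slabs), slabs[0], slabs[-1])
```

Each slab can then be sent to the model independently, which makes it easier to batch work across available hardware.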

MRI AI: The Complexity Challenge

Magnetic resonance imaging is, by a considerable margin, the most technically challenging modality for AI. The reasons are both fundamental and practical.

A single MRI study is not one image or even one stack of images. It is a collection of sequences — T1-weighted, T2-weighted, FLAIR, diffusion-weighted, contrast-enhanced — each highlighting different tissue characteristics. An AI system analyzing a brain MRI needs to understand how the same lesion appears across multiple sequences and synthesize that information into a coherent assessment. This multi-sequence reasoning is a step beyond what current single-input architectures handle well.

Brain MRI is the most active area of MRI AI research. Volumetric analysis for neurodegeneration — measuring hippocampal volume for Alzheimer’s assessment, tracking white matter lesion burden in multiple sclerosis — has reached clinical use. These are largely quantitative measurement tasks, which play to AI’s strengths. Tumor segmentation and characterization are progressing but remain primarily in research settings.
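The quantitative step behind volumetric analysis is simple once a segmentation exists: count the labeled voxels and multiply by the physical size of one voxel. The sketch below assumes a binary mask stored as nested lists and a voxel spacing in millimeters; both the data layout and the function name are illustrative assumptions, and the hard part (producing an accurate mask) is not shown.

```python
def mask_volume_ml(mask, spacing_mm):
    """Volume of a binary segmentation mask in milliliters.

    mask:       nested lists indexed [slice][row][column] holding 0 or 1
    spacing_mm: (slice_thickness, row_spacing, column_spacing) in mm
    """
    dz, dy, dx = spacing_mm
    if min(dz, dy, dx) <= 0:
        raise ValueError("voxel spacing must be positive")
    voxel_count = sum(value for plane in mask for row in plane for value in row)
    voxel_volume_mm3 = dz * dy * dx
    return voxel_count * voxel_volume_mm3 / 1000.0  # 1 mL = 1000 mm^3


if __name__ == "__main__":
    # Toy 2 x 2 x 2 mask with every voxel labeled, 1 mm isotropic spacing
    toy_mask = [[[1, 1], [1, 1]], [[1, 1], [1, 1]]]
    print(mask_volume_ml(toy_mask, (1.0, 1.0, 1.0)))
```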

Cardiac MRI analysis, particularly automated ejection fraction calculation and myocardial tissue characterization, is technically feasible but has been slower to reach clinical deployment. The variability in acquisition protocols across institutions creates a training data challenge that chest X-ray AI never faced.
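Ejection fraction itself is a one-line formula once the ventricular volumes are known: the fraction of the end-diastolic volume that is ejected with each beat. A minimal sketch, assuming the end-diastolic and end-systolic volumes have already been measured from a segmentation (the function name is illustrative):

```python
def ejection_fraction_percent(edv_ml: float, esv_ml: float) -> float:
    """Ejection fraction as a percentage.

    EF = (EDV - ESV) / EDV * 100
    edv_ml: end-diastolic volume in mL
    esv_ml: end-systolic volume in mL
    """
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    if not 0 <= esv_ml <= edv_ml:
        raise ValueError("end-systolic volume must be between 0 and EDV")
    return (edv_ml - esv_ml) / edv_ml * 100.0


if __name__ == "__main__":
    print(round(ejection_fraction_percent(120.0, 50.0), 1))
```

The arithmetic is trivial; the variability the paragraph describes enters through the segmentation that produces the two volumes.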

Musculoskeletal MRI — detecting meniscal tears, ACL injuries, rotator cuff pathology — is an area where AI has shown strong performance in controlled studies. The clinical deployment challenge is less about accuracy and more about integration: these studies are often read by subspecialists who already have high accuracy, and the workflow integration needs to add value without adding friction.

MRI AI will mature, but it will likely do so anatomy by anatomy and sequence by sequence, rather than in a single leap.

Mammography AI: The Highest-Impact Opportunity

If you ask most radiology AI researchers which modality has the greatest potential for population-level health impact, mammography is often the answer.

The numbers tell the story. In the United States alone, approximately 40 million screening mammograms are performed annually. Each study is read by a radiologist who must detect subtle calcifications and masses against a background of dense, overlapping breast tissue. The work is visually demanding, repetitive, and high-stakes — a missed cancer can mean the difference between early-stage treatment and late-stage diagnosis.

Radiologist fatigue is not a theoretical concern. Studies have shown that diagnostic accuracy for mammography decreases over the course of long reading sessions. The false-negative rate for screening mammography ranges from 10% to 20%, depending on breast density and reader experience.

AI systems for mammography have shown performance comparable to or exceeding that of individual radiologists in multiple large-scale studies, including evaluations on the UK OPTIMAM image database and Swedish screening trials. The most promising deployment model is not replacement but augmentation: AI as a second reader that flags studies for additional attention, or as a triage tool that sorts studies into high-confidence normal and needs-human-review categories.
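The triage model can be pictured as a threshold on a per-study score. The sketch below is a simplified illustration, not a description of any deployed system: the `suspicion_score` field and the 0.05 threshold are hypothetical, and a real operating point would be set through clinical validation.

```python
from dataclasses import dataclass


@dataclass
class ScoredStudy:
    study_id: str
    suspicion_score: float  # hypothetical model output between 0.0 and 1.0


def triage(studies, normal_threshold: float = 0.05) -> dict[str, list[str]]:
    """Sort studies into the two triage categories by score.

    Anything at or above the threshold goes to a human reader, so lowering
    the threshold trades workload reduction for a larger safety margin.
    """
    buckets = {"high_confidence_normal": [], "needs_human_review": []}
    for study in studies:
        if not 0.0 <= study.suspicion_score <= 1.0:
            raise ValueError(f"score out of range for {study.study_id}")
        if study.suspicion_score < normal_threshold:
            buckets["high_confidence_normal"].append(study.study_id)
        else:
            buckets["needs_human_review"].append(study.study_id)
    return buckets


if __name__ == "__main__":
    worklist = [ScoredStudy("MG-001", 0.01), ScoredStudy("MG-002", 0.42)]
    print(triage(worklist))
```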

The transition from 2D full-field digital mammography to 3D digital breast tomosynthesis (DBT) adds both opportunity and complexity. DBT generates substantially more image data per study, increasing reading time for radiologists and making AI assistance even more valuable. But it also requires AI systems trained specifically on tomosynthesis data, which is less widely available than 2D mammography datasets.

Mammography AI may ultimately become the modality where AI has the most measurable impact on patient outcomes at scale.

Pathology and Beyond

Digital pathology represents a fundamentally different AI challenge. A single whole-slide image from a surgical pathology specimen can contain billions of pixels at full resolution. The abnormalities of interest — atypical cells, mitotic figures, architectural distortion — exist at a scale that requires both high-magnification detail analysis and low-magnification pattern recognition.
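A quick calculation shows why whole-slide images are usually processed as tiles at several magnifications. The sketch below assumes a hypothetical 100,000 x 80,000 pixel slide, 512-pixel square tiles, and a pyramid in which each level halves the resolution; actual slide formats and tile sizes vary.

```python
def level_dimensions(width: int, height: int, level: int) -> tuple[int, int]:
    """Slide dimensions at a pyramid level, assuming each level halves resolution."""
    factor = 2 ** level
    return -(-width // factor), -(-height // factor)  # ceiling division


def tile_grid(width: int, height: int, tile_size: int = 512) -> tuple[int, int]:
    """Number of tile columns and rows needed to cover an image."""
    if tile_size <= 0:
        raise ValueError("tile_size must be positive")
    return -(-width // tile_size), -(-height // tile_size)


if __name__ == "__main__":
    width, height = 100_000, 80_000          # hypothetical full-resolution slide
    print(width * height)                    # 8,000,000,000 pixels
    cols, rows = tile_grid(width, height)
    print(cols * rows)                       # 30,772 tiles at full resolution
    low_w, low_h = level_dimensions(width, height, 4)
    low_cols, low_rows = tile_grid(low_w, low_h)
    print(low_cols * low_rows)               # 130 tiles at 1/16 resolution
```

The low-resolution level is cheap enough to scan for overall architecture, while the full-resolution tiles are where cell-level detail lives.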

AI applications in pathology are advancing on multiple fronts. Automated Gleason grading for prostate cancer has shown strong concordance with expert pathologists. Metastatic breast cancer detection in sentinel lymph nodes — the focus of the CAMELYON challenge — demonstrated that AI could match pathologist performance on specific, well-defined tasks. Ki-67 proliferation index quantification, which is tedious and subjective when done manually, is a natural fit for automated analysis.

Beyond traditional radiology and pathology, AI is extending into other visual diagnostic domains:

Dermatology — AI classification of skin lesions from clinical photographs has shown performance comparable to dermatologists for melanoma detection. The accessibility of the input (a smartphone photograph) makes this a compelling telemedicine application.

Ophthalmology — Retinal image analysis for diabetic retinopathy screening is one of the earliest FDA-cleared autonomous AI diagnostic applications. Fundus photography AI is being deployed in primary care settings, enabling screening without an ophthalmologist present.

Point-of-care ultrasound — AI-guided ultrasound, where the system provides real-time feedback on probe positioning and image quality, is an emerging application that could extend ultrasound capability to non-specialist operators.

The Integration Challenge

Building an AI model that performs well on a test dataset is one problem. Making it work within the daily reality of a radiology department is an entirely different one.

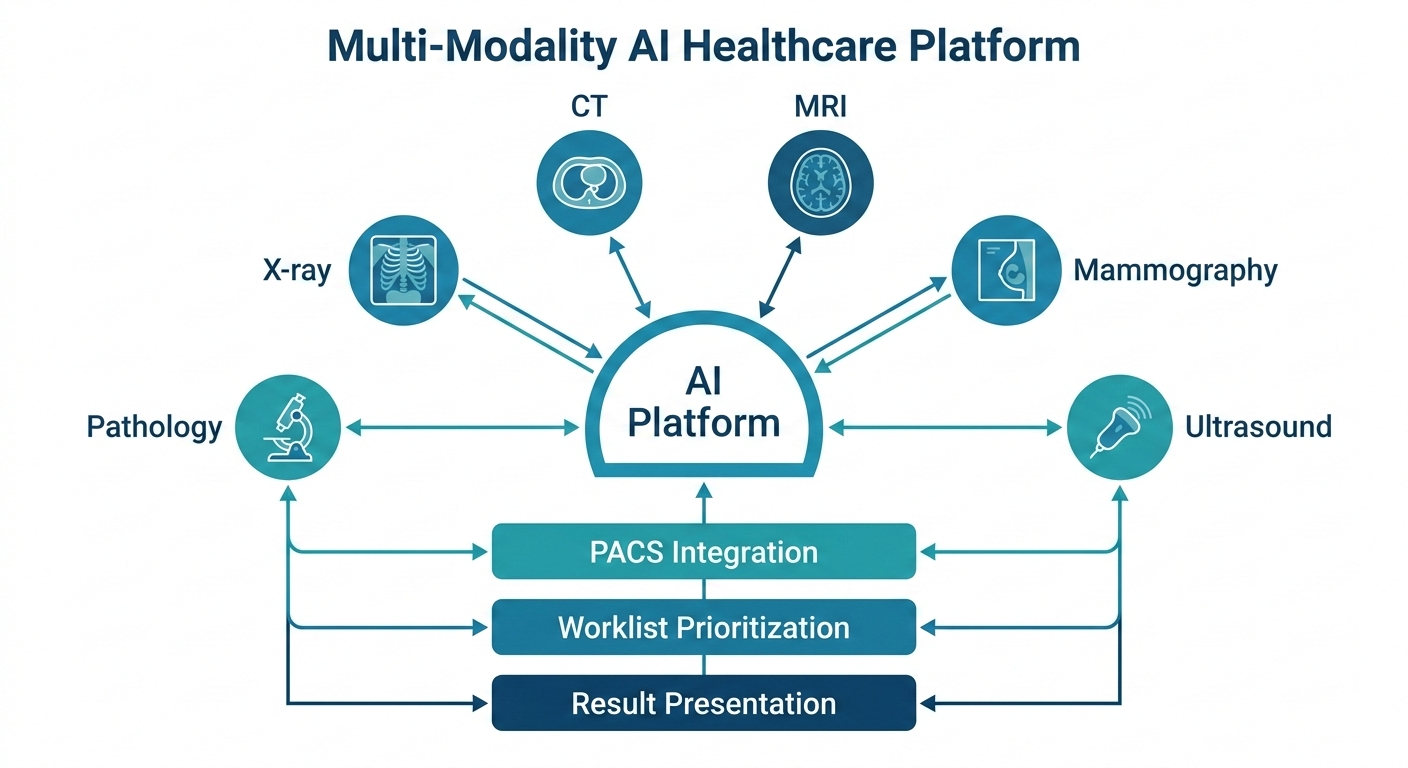

Multi-modality AI amplifies every integration challenge that single-modality systems face. Consider what a hospital needs to deploy AI across chest X-ray, CT, and mammography simultaneously:

PACS integration. The AI system must receive studies from the picture archiving and communication system, process them, and return results — structured reports, annotated images, heatmaps — back into the radiologist’s reading workflow. This needs to work across different PACS vendors with different DICOM implementations.

Worklist prioritization. If the AI flags a critical finding on a CT scan, that information needs to surface on the radiologist’s worklist immediately, not sit in a separate dashboard that nobody checks during a busy shift.

Result presentation. A chest X-ray AI result and a mammography AI result serve different clinical contexts and need different presentation formats. One-size-fits-all interfaces do not work.

Multi-site consistency. A health system with ten hospitals running three different CT scanner models needs AI that performs consistently across all of them. Scanner-specific bias in AI output is a known and non-trivial problem.

Regulatory compliance. Each modality-specific AI application may require separate regulatory clearance. Managing a portfolio of AI tools across modalities creates compliance overhead that single-modality deployments do not face.

The platforms that win in multi-modality AI will not necessarily be the ones with the best individual models. They will be the ones that solve the orchestration problem — routing the right study to the right model, presenting results in the right context, and doing it all without adding clicks to an already overloaded workflow.
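As a rough illustration of the orchestration problem, the Python sketch below routes studies to a model by their DICOM Modality value and keeps AI-flagged critical studies at the front of a worklist. The model names and the `Worklist` class are hypothetical, and routing on modality alone is a simplification; a real system would also consider body part, protocol, and site configuration.

```python
import heapq
import itertools

# Hypothetical model registry keyed by DICOM Modality (0008,0060) values.
MODEL_BY_MODALITY = {
    "CR": "chest_xray_model",   # computed radiography
    "DX": "chest_xray_model",   # digital radiography
    "CT": "ct_model",
    "MG": "mammography_model",
}


def route(modality: str):
    """Return the model name for a modality, or None if no model applies."""
    return MODEL_BY_MODALITY.get(modality)


class Worklist:
    """Reading queue where critical studies are served before routine ones."""

    def __init__(self):
        self._heap = []
        self._arrival = itertools.count()  # preserves arrival order within a priority

    def add(self, study_id: str, critical: bool = False) -> None:
        priority = 0 if critical else 1
        heapq.heappush(self._heap, (priority, next(self._arrival), study_id))

    def next_study(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    worklist = Worklist()
    worklist.add("CXR-101")
    worklist.add("CT-202", critical=True)  # e.g. flagged by the CT model
    worklist.add("MG-303")
    while len(worklist):
        print(worklist.next_study())
```

Studies with no matching model fall through with `None` and are read as usual, which keeps the AI layer additive rather than a gate in the workflow.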

What This Means for MYAIRA

At AI Bharata, we built MYAIRA’s AI capabilities starting where the evidence was strongest: chest X-ray analysis. Today, our system analyzes chest radiographs for over fifteen pathologies in under three seconds, returning structured findings with heatmap localization and confidence scores. It is production-ready, clinically validated, and integrated into real workflows.

But we have always understood that chest X-ray is the starting point, not the destination.

Our roadmap extends AI analysis to CT, MRI, mammography, and ultrasound as these capabilities reach the validation thresholds we require for clinical deployment. We are not interested in shipping demo-quality models. Each modality will meet the same standard of accuracy, speed, and clinical utility that our chest X-ray AI delivers today.

Importantly, the infrastructure is already in place. Medixshare, our imaging sharing platform, handles all major imaging modalities today — X-ray, CT, MRI, ultrasound, mammography, and pathology slides. The sharing, viewing, and collaboration layer is modality-agnostic by design. As AI models for new modalities reach production readiness, the delivery infrastructure does not need to be rebuilt.

For enterprise customers, our roadmap includes multi-modality AI access as capabilities become available, along with custom model training for institution-specific needs. This is not about offering a single monolithic AI that claims to do everything. It is about a platform that grows with the science, adding validated capabilities modality by modality. That is AI Bharata’s commitment to clinicians and the patients they serve.

The future of imaging AI is not a single breakthrough. It is the steady, rigorous expansion of automated analysis across every modality, every anatomy, and every clinical context where it can genuinely help clinicians deliver better care.

See what MYAIRA AI can do today — and where we are headed. Explore MYAIRA Features to learn about our chest X-ray AI, Medixshare imaging platform, and the multi-modality roadmap that is shaping the future of clinical imaging.