Your doctor tells you they’re sending your chest X-ray for analysis — and mentions that an AI system will also review it. You nod, but a dozen questions flash through your mind. Is a computer deciding what’s wrong with me? Can it actually understand a medical image? Should I be reassured or worried?

You’re not alone in wondering. As AI makes its way into clinics and hospitals, patients are hearing the phrase “AI-assisted diagnosis” more often — usually with very little explanation of what it actually means. This guide is here to change that. No jargon, no hype, just a clear and honest look at what happens when artificial intelligence looks at your medical scan.

What AI sees when it looks at your scan

Let’s start with the basics. When we say an AI system “reads” your chest X-ray, we don’t mean it understands medicine the way your doctor does. It doesn’t think, reason, or have intuition. What it does is something much more specific: it recognizes visual patterns.

Here’s a rough analogy. Imagine you showed someone thousands of photographs of cloudy skies and told them which ones produced rain. Over time, they’d notice patterns — certain cloud formations, certain shades of gray — that correlate with rain. They wouldn’t understand atmospheric physics, but they’d get good at predicting rain from a photo.

AI works similarly. A system like MYAIRA AI, developed by AI Bharata, has been trained on large datasets of chest X-rays reviewed and labeled by radiologists. Through that training, the AI learned to recognize the visual signatures of more than 15 different conditions — pneumonia, an enlarged heart (cardiomegaly), fluid around the lungs (pleural effusion), a collapsed lung (pneumothorax), atelectasis, consolidation, masses, nodules, and more.

When your X-ray arrives, the AI scans the entire image and checks for each of these patterns. It does this in under three seconds. Not because it’s rushing, but because pattern matching across pixels is something computers are exceptionally fast at.

But here’s the important part: recognizing a pattern is not the same as making a diagnosis. That distinction matters, and it’s at the heart of what “AI-assisted” actually means.

AI as a second pair of eyes, not a replacement doctor

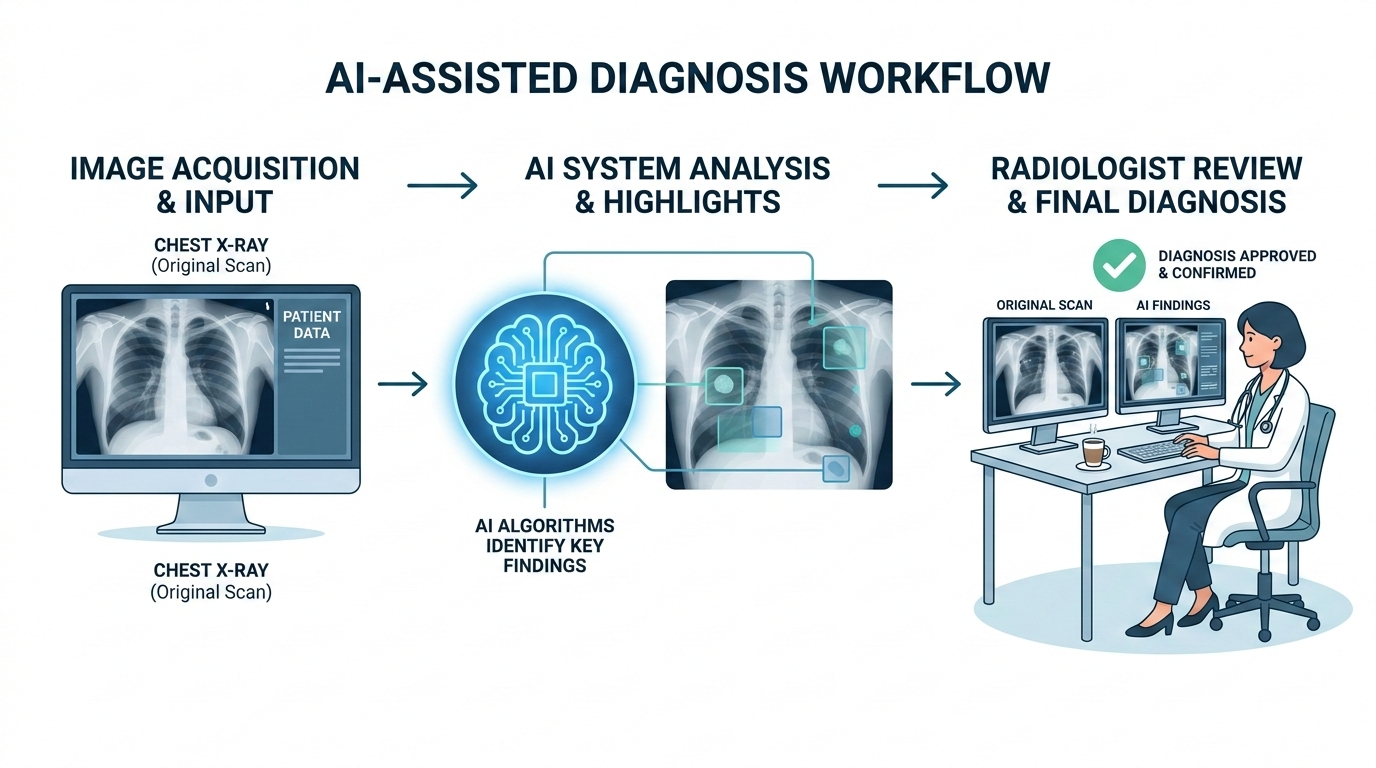

The word “assisted” in “AI-assisted diagnosis” is doing real work. It means the AI is there to help your doctor — not to replace them.

In radiology, there’s a well-established practice called “double reading,” where two radiologists independently review the same scan. Two readers catch more than one — the second picks up findings the first might have missed. But double reading is expensive and time-consuming. Many hospitals simply don’t have enough radiologists to do it routinely. (The shortage of radiologists is a real and growing issue — we’ve written about that separately.)

This is where AI fits in. Think of it as a tireless second reader. It never gets fatigued at 2 a.m. It doesn’t have a backlog of 80 scans pressing on its attention. It looks at every image with the same level of focus, every single time.

But — and this is a big but — the AI’s findings always go to a human radiologist. Your doctor reviews the AI’s output alongside the image itself, applies their clinical judgment, considers your medical history, asks questions the AI can’t ask, and arrives at a diagnosis. The AI flags; the doctor decides.

Think of a spell-checker: it catches errors you might have missed, but you decide whether to accept its suggestions. Sometimes it flags a correct word; sometimes it misses a mistake only a human would catch from context. AI in radiology works much the same way — useful, but not the final word.

How confidence scores work

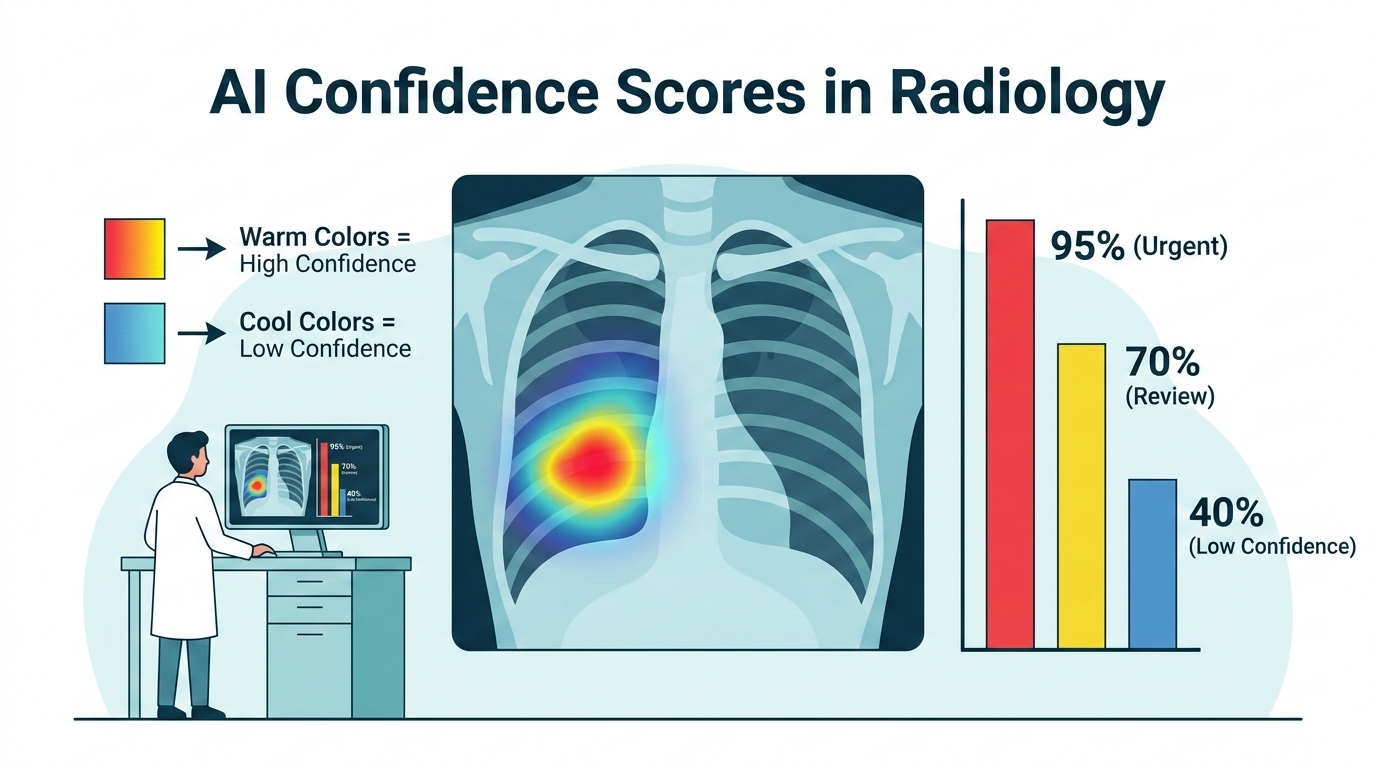

One thing that might come up in your results, or that your doctor might mention, is a “confidence score.” This is a number — usually expressed as a percentage — that represents how certain the AI is about a particular finding.

For example, the AI might report: “87% probability of pneumonia in the right lower lobe.”

What does that mean? It does not mean you have an 87% chance of having pneumonia. That’s a common and understandable misreading.

What it means is closer to this: based on the visual patterns in your X-ray, the AI’s mathematical model rates the similarity to pneumonia patterns at 87 out of 100. It’s a measure of the AI’s confidence in its own pattern match, not a direct statement about your health.

Think of it like this. If you showed someone a photo and asked, “How sure are you that’s a golden retriever?” they might say, “About 90% sure.” That tells you something useful, but the percentage reflects their certainty, not a fact about the dog.
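For readers curious where such a percentage can come from, here is a minimal sketch in Python. It assumes a common design in image-classification systems, where the model produces a raw score for each condition and a sigmoid function squeezes that score into the range 0 to 1. We are not describing MYAIRA AI's internals here; the function names and the raw scores are made up for illustration.

```python
import math

def sigmoid(raw_score):
    """Squeeze any raw model score into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-raw_score))

def to_percent(raw_score):
    """Express the squeezed score as a whole-number percentage."""
    return round(sigmoid(raw_score) * 100)

# Hypothetical raw scores for one X-ray, one per condition checked.
raw_scores = {"pneumonia": 1.9, "pleural_effusion": -2.0}

for condition, raw in raw_scores.items():
    print(condition, to_percent(raw))  # pneumonia 87, pleural_effusion 12
```

Notice that nothing in this calculation knows your symptoms or history. The number describes the model's pattern match and nothing more.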

In practice, confidence scores help radiologists prioritize. A 95% confidence score demands careful review. A finding at 40% might be noise, or it might be something subtle worth a closer look. The radiologist uses the score as one input among many, not as a verdict.

MYAIRA AI also uses these scores for something called priority triage flagging. If the AI detects a high-confidence finding for a serious condition — say, a pneumothorax — it can flag that scan for urgent review. In a busy hospital where dozens of scans are waiting in queue, this kind of prioritization can genuinely matter. The scan that needs attention in the next few minutes gets seen in the next few minutes, instead of waiting its turn.
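The triage idea above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not MYAIRA AI's actual logic: the 0.90 threshold, the list of urgent conditions, and the `Scan` structure are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical settings for illustration only; not MYAIRA AI's actual values.
URGENT_CONDITIONS = {"pneumothorax"}
URGENT_THRESHOLD = 0.90

@dataclass
class Scan:
    scan_id: str
    arrival_order: int  # 1 = first scan to arrive
    findings: dict      # condition name -> confidence score from 0.0 to 1.0

def is_urgent(scan):
    """True if any serious condition meets the urgency threshold."""
    return any(scan.findings.get(condition, 0.0) >= URGENT_THRESHOLD
               for condition in URGENT_CONDITIONS)

def review_order(scans):
    """Urgent scans first; within each group, keep arrival order."""
    ranked = sorted(scans, key=lambda s: (not is_urgent(s), s.arrival_order))
    return [s.scan_id for s in ranked]

waiting = [
    Scan("A", 1, {"pneumonia": 0.87}),
    Scan("B", 2, {"pneumothorax": 0.95}),
    Scan("C", 3, {"pneumothorax": 0.40}),
]
print(review_order(waiting))  # ['B', 'A', 'C']
```

Scan B arrived second but moves to the front because of its high-confidence pneumothorax finding. Every scan still reaches a radiologist; only the order changes.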

The heatmap: AI shows its work, literally

One of the most interesting things about modern radiology AI is that it doesn’t just give you a yes-or-no answer. It can show you where on the image it’s looking.

MYAIRA AI generates what’s called a heatmap — a color overlay on your X-ray that highlights the regions the AI focused on when making its assessment. Areas in warmer colors (reds and yellows) are regions the AI weighted most heavily. Cooler colors (blues and greens) are regions that contributed less to the finding.

The technical name for this is Grad-CAM (Gradient-weighted Class Activation Mapping), but you don’t need to remember that. What matters is the concept: the AI is showing its work.

Why does this matter? It gives the radiologist a way to check the AI’s work. If the AI says “probable pneumonia in the right lower lobe” and the heatmap highlights exactly that area, it’s consistent. If the heatmap highlights the shoulder instead of the lung, the radiologist knows something is off and can disregard the finding.
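The consistency check described above can be pictured as a small Python sketch. It is a simplified illustration, not how any particular product works: a real heatmap has far more cells than this 4-by-4 grid, and the region boxes below are hypothetical. In practice the radiologist makes this judgment by eye.

```python
def hottest_cell(heatmap):
    """Return the (row, col) of the highest value; first one wins on ties."""
    best_value, best_position = None, None
    for r, row in enumerate(heatmap):
        for c, value in enumerate(row):
            if best_value is None or value > best_value:
                best_value, best_position = value, (r, c)
    return best_position

def heatmap_matches_region(heatmap, region):
    """Check whether the hottest cell lies inside the expected region.

    region is (top, left, bottom, right), with inclusive bounds.
    """
    top, left, bottom, right = region
    row, col = hottest_cell(heatmap)
    return top <= row <= bottom and left <= col <= right

# Toy heatmap: higher numbers mean the AI weighted that area more heavily.
heatmap = [
    [0.1, 0.1, 0.0, 0.1],
    [0.2, 0.1, 0.1, 0.0],
    [0.6, 0.3, 0.1, 0.1],
    [0.7, 0.9, 0.2, 0.1],
]

# Hypothetical boxes on the toy grid.
RIGHT_LOWER_LOBE = (2, 0, 3, 1)
SHOULDER = (0, 0, 0, 1)

print(heatmap_matches_region(heatmap, RIGHT_LOWER_LOBE))  # True
print(heatmap_matches_region(heatmap, SHOULDER))          # False
```

When the reported location and the hottest area agree, the finding is at least internally consistent. When they disagree, that is a cue for the radiologist to be skeptical.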

For you as a patient, the heatmap is a form of transparency. The AI isn’t a black box. It’s pointing to specific regions on your scan and saying, “This is what caught my attention.” Your doctor can walk you through it: “See this area here? The AI flagged it, and I agree — there’s a shadow consistent with fluid buildup.”

The heatmap shows where the AI focused; it is not a step-by-step explanation of its reasoning. But it’s a meaningful step toward making AI in medicine understandable and accountable.

What AI can and cannot do today

Honesty about limitations is important. Here’s a straightforward look at where radiology AI stands right now.

What AI does well:

- Pattern detection at scale. AI is very good at scanning an image quickly and flagging areas that match known pathology patterns. It’s consistent and fast.

- Reducing missed findings. Studies have shown that AI as a second reader can catch findings that a single human reader might overlook, particularly in high-volume settings where fatigue is a factor.

- Triage and prioritization. AI can help ensure that the most urgent scans get seen first.

- Standardization. AI applies the same criteria to every scan. It doesn’t have off days.

What AI cannot do:

- Make a clinical diagnosis. A diagnosis involves your symptoms, history, lab results, medications, and many other factors the AI has no access to. Only your doctor can synthesize all of that.

- Understand context. AI doesn’t know you had surgery last month, or that the shadow on your X-ray is a known and stable finding from years ago. Context is a human skill.

- Handle rare or unusual cases reliably. AI is strongest with conditions it has seen many examples of in training. Rare presentations or unusual anatomy can trip it up.

- Explain why. AI can show where it’s looking (the heatmap), but it can’t articulate medical reasoning. It can only say, in effect, “This area looks like pneumonia to me.”

- Replace the doctor-patient relationship. Medicine involves communication, empathy, shared decision-making, and trust. AI has no role in that, nor should it.

These limitations are not failures — they’re the natural boundaries of what this technology is designed to do. AI-assisted diagnosis is a tool. A powerful one, but a tool nonetheless.

What this means for you as a patient

So, your doctor mentions AI is part of your care. Here’s what you can take away from all of this:

Your scan gets an extra layer of review. AI adds a second reading to your image. That’s generally a good thing. More eyes — even digital ones — mean a lower chance that something gets missed.

Your doctor is still in charge. The AI doesn’t make decisions about your care. It provides information that your radiologist and your physician use alongside everything else they know about you. The human is always the final decision-maker.

You can ask questions. If your doctor mentions an AI finding, you have every right to ask: What did the AI flag? Do you agree with it? What does the heatmap show? Doctors who use AI tools should be able to explain what the AI found and why they agree or disagree with it.

Speed can matter. In urgent situations, the AI’s ability to triage scans quickly — flagging a potential pneumothorax within seconds, for example — can help ensure you get timely care.

AI is not infallible. It can produce false positives (flagging something that isn’t actually there) and false negatives (missing something that is). This is true of human readers too, which is exactly why the combination of human and AI tends to perform better than either one alone.
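To make the two kinds of error concrete, here is a short Python sketch that tallies them. The five cases are invented purely for illustration and say nothing about the accuracy of any real system. Each case pairs what the AI flagged with what the radiologist ultimately confirmed.

```python
def tally(cases):
    """Count each outcome from (ai_flagged, finding_present) pairs."""
    counts = {
        "true_positive": 0,   # AI flagged it, and it was there
        "false_positive": 0,  # AI flagged it, but nothing was there
        "false_negative": 0,  # AI missed something that was there
        "true_negative": 0,   # AI stayed quiet, and nothing was there
    }
    for ai_flagged, finding_present in cases:
        if ai_flagged and finding_present:
            counts["true_positive"] += 1
        elif ai_flagged:
            counts["false_positive"] += 1
        elif finding_present:
            counts["false_negative"] += 1
        else:
            counts["true_negative"] += 1
    return counts

# Made-up cases: (did the AI flag it?, was the finding really present?)
cases = [
    (True, True),
    (True, False),
    (False, True),
    (False, False),
    (True, True),
]
print(tally(cases))
# {'true_positive': 2, 'false_positive': 1, 'false_negative': 1, 'true_negative': 1}
```

A human second look is what catches the false positive and the false negative in a tally like this, which is why the AI's output is reviewed rather than acted on directly.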

Your data matters. Any AI system analyzing your medical images should be operating under the same privacy and security standards as the rest of your medical care. This is non-negotiable.

The arrival of AI in radiology isn’t something to fear or to blindly celebrate. It’s a practical development that, when implemented well, adds a meaningful layer of safety and efficiency to the diagnostic process. Companies like AI Bharata are building these tools with a clear principle: the AI assists, the doctor decides. Your doctor still knows you. The AI just helps them see a little more clearly.

Want to understand more about how MYAIRA AI works as a second reader for chest X-ray analysis? Visit our features page for a closer look at the technology behind the tool.