AI and Radiologist Burnout: Why the Tool Meant to Help Might Be Making Things Worse

The pitch is simple and compelling: radiologists are overwhelmed, so give them AI to share the load. Faster reads. Fewer misses. Less stress.

It sounds right. The logic is clean. And for a lot of people selling radiology AI, it’s the entire value proposition.

There’s just one problem. The data says the opposite might be happening.

A study published in JAMA Network Open examined the relationship between AI tool usage and burnout among practicing radiologists. The finding was counterintuitive and uncomfortable: radiologists who used AI tools reported higher odds of burnout than those who didn’t. And the relationship was dose-dependent — the more AI tools in the workflow, the higher the burnout risk.

More AI. More burnout. Not less.

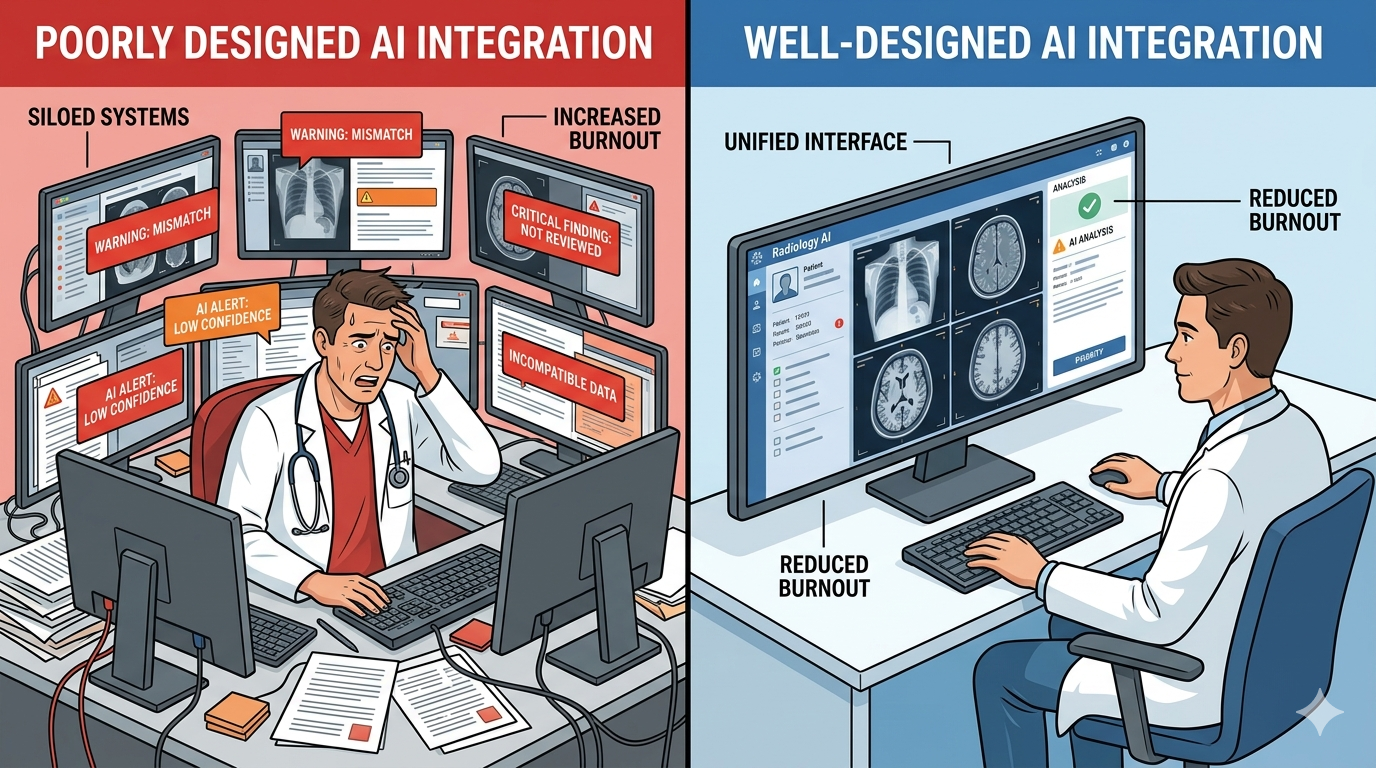

This isn’t an argument against AI in radiology. It’s an argument against bad AI in radiology. And understanding the difference matters for every radiologist, department head, and administrator deciding what tools to bring into their reading rooms.

The burnout crisis is real — and AI was supposed to fix it

We’ve written before about the radiologist shortage crisis: 19,500 unfilled positions projected by 2034, 3-5% annual growth in imaging volume, and a training pipeline that can’t keep up. Burnout is a predictable consequence of that imbalance.

The 2024 ACR survey found that over 50% of practicing radiologists report symptoms of burnout — emotional exhaustion, depersonalization, and diminished professional satisfaction. Emergency radiology and breast imaging subspecialties report even higher rates. Burnout drives early retirement, reduced hours, and exits from clinical practice, which worsens the shortage in a vicious cycle.

Against that backdrop, AI appeared as the obvious solution. If radiologists are drowning in volume, give them a tool that handles the screening layer. Let AI flag the urgent findings, triage the worklist, pre-populate reports. The radiologist focuses on what requires human judgment. Volume managed. Stress reduced. Problem solved.

That was the theory.

What the research actually found

The JAMA Network Open study surveyed radiologists across multiple practice settings — academic, private, hospital-employed, and teleradiology — assessing their use of AI-enabled clinical tools and measuring burnout using validated instruments.

The key findings challenge the industry narrative:

Dose-response relationship. Radiologists using three or more AI tools in their daily workflow had significantly higher odds of burnout compared to those using none. This wasn’t a binary effect. It scaled: more tools, more burnout. The pattern held after controlling for practice setting, subspecialty, case volume, and career stage.

Alert fatigue emerged as a primary mechanism. Radiologists reported that AI-generated alerts, flags, and notifications created a new layer of cognitive work. Every alert demands evaluation: Is this finding real? Is it clinically significant? Does it change my report? When the AI flags something I already identified, should I document that I saw it independently? When it flags something I disagree with, do I need to justify my disagreement?

Workflow disruption was pervasive. Many AI tools operate as separate systems, with separate interfaces, separate logins, and separate windows. The radiologist reads the study in the PACS viewer, then checks the AI output in a different application. This context-switching creates friction that accumulates with every case, all day long.

Documentation burden increased. With AI findings on the record, radiologists felt pressure to address every flagged finding in their reports, even false positives. When the AI has flagged something the radiologist did not act on, fear of malpractice liability drives defensive documentation that adds time without adding diagnostic value.

These aren’t edge cases. They describe the daily reality of radiologists working with AI tools that were designed to demonstrate technical capability rather than reduce cognitive burden.

Why bad AI makes burnout worse

The paradox dissolves when you look at how most radiology AI is actually implemented. The problem isn’t artificial intelligence. The problem is artificial friction.

Alert fatigue: the notification avalanche

Alert fatigue is well-documented in medicine. EHR systems taught us this lesson years ago: when every lab value, medication interaction, and vital sign deviation triggers a pop-up, clinicians learn to dismiss them all. The boy who cried wolf, at clinical scale.

Radiology AI is repeating this mistake. A typical AI tool might flag 15-30 findings per chest X-ray — varying confidence levels, varying clinical significance, some real, some artifact. The radiologist didn’t ask for this analysis. It arrives uninvited, in a sidebar or overlay, demanding attention.

When the AI correctly identifies a large pleural effusion that the radiologist spotted in the first two seconds of viewing the image, the alert adds no diagnostic value but still demands acknowledgment. When it flags a calcified granuloma as a “possible nodule” at 67% confidence, it creates work: the radiologist must evaluate the finding, decide it’s a false positive, and potentially document why they’re not acting on it.

Multiply this across 60, 80, 100 cases per day. The cognitive load is substantial.
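To make that multiplication concrete, here is a rough back-of-the-envelope sketch. The per-study and per-day ranges are the ones quoted above, and it assumes every study is flagged at the chest X-ray rate. The `seconds_per_flag` value is purely an illustrative assumption, not a measured figure.

```python
def daily_flag_load(flags_per_study: int, studies_per_day: int,
                    seconds_per_flag: float = 5.0) -> tuple[int, float]:
    """Return (flags per day, minutes spent evaluating them).

    seconds_per_flag is an assumed value chosen for illustration only.
    """
    flags = flags_per_study * studies_per_day
    minutes = flags * seconds_per_flag / 60
    return flags, minutes


# Low and high ends of the ranges quoted in the text.
print(daily_flag_load(15, 60))    # (900, 75.0)
print(daily_flag_load(30, 100))   # (3000, 250.0)
```

Even with a generous five seconds per flag, the low end of the range works out to more than an hour a day spent on flags alone.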

The integration problem

Most radiology AI products are built as standalone applications. They receive DICOM images, run inference, and produce results in their own interface. This means the radiologist must:

- Open the study in the PACS viewer

- Switch to the AI application (often a separate browser window or workstation)

- Match the AI findings to the study

- Switch back to the PACS viewer

- Dictate the report in the RIS/reporting system

Three systems. Multiple context switches. Per case. All day.

This isn’t integration. It’s constant context-switching, which the human brain handles poorly. Cognitive science is clear on this: task-switching imposes a measurable cost in time and accuracy. For a radiologist working through a full day’s worklist, the cumulative effect is exhaustion that comes not from the medicine but from the interface.

The liability trap

As we explored in our post on AI malpractice in radiology, the legal landscape around AI-generated findings is evolving rapidly. The practical effect is that AI alerts create a documentation obligation: if the AI flagged it and you didn’t address it, that becomes a discoverable fact in a malpractice claim.

This transforms every false positive from a minor annoyance into a medicolegal risk. Radiologists respond rationally by documenting more, hedging more, and adding qualifiers to their reports. Report length increases. Reading time increases. And the burnout-inducing pressure of the worklist gets worse, not better.

What good AI integration looks like

The research doesn’t say AI is bad for radiologists. It says the current implementation paradigm is bad for radiologists. The distinction matters because it points toward a solution.

Good AI reduces cognitive load. Bad AI redistributes it. Here’s what the difference looks like in practice:

Minimal friction, maximal signal

An AI tool should produce results that fit into the existing reading workflow without requiring the radiologist to leave their primary workspace. The output should appear where the radiologist already looks — inside the PACS viewer, alongside the image — not in a separate application that requires a context switch.

The findings should be filtered for clinical significance, not dumped raw. A sub-3-second analysis that surfaces the three most clinically relevant findings at high confidence is more useful than a comprehensive dump of 25 findings at varying confidence levels. The radiologist needs a second opinion, not a second worklist.
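As a minimal sketch of what "filtered, not dumped raw" could mean in software: the example below keeps only findings above a confidence cutoff and caps the list at three. The `Finding` type, the 0.9 cutoff, and the sample findings are all hypothetical, and model confidence is used here only as a stand-in for clinical significance, which a real system would need to assess separately.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    label: str
    confidence: float  # model score in [0, 1]


def surface_top_findings(findings, min_confidence=0.9, limit=3):
    """Keep high-confidence findings, strongest first, capped at `limit`."""
    confident = [f for f in findings if f.confidence >= min_confidence]
    confident.sort(key=lambda f: f.confidence, reverse=True)
    return confident[:limit]


# Hypothetical raw output for one study.
raw = [
    Finding("pleural effusion", 0.97),
    Finding("possible nodule", 0.67),
    Finding("cardiomegaly", 0.92),
    Finding("rib artifact", 0.41),
]

for finding in surface_top_findings(raw):
    print(finding.label, finding.confidence)
```

The low-confidence "possible nodule" and the artifact never reach the primary view, though nothing stops a viewer from offering the full list on request.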

Intelligent alert thresholds

Not every finding needs an alert. A well-designed AI system should distinguish between urgent, actionable findings (large pneumothorax, tension pneumothorax, misplaced lines) that warrant interruption, and incidental or low-confidence findings that should be available on request but not pushed.

The radiologist should control what surfaces and what stays quiet. Customizable alert thresholds — by pathology, by confidence level, by clinical context — put the radiologist in command of the AI, rather than the other way around.
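One way to picture radiologist-controlled thresholds is a small routing rule: a finding interrupts only if its pathology is on the radiologist's urgent list and its confidence clears the cutoff set for that pathology. Everything here (the `Route` names, the threshold values, the `route_finding` function) is a hypothetical illustration, not a description of any particular product.

```python
from enum import Enum
from typing import Optional


class Route(Enum):
    INTERRUPT = "interrupt"     # pushed to the radiologist immediately
    ON_REQUEST = "on_request"   # available, but never pushed


# Hypothetical per-radiologist settings: pathology -> minimum confidence.
URGENT_THRESHOLDS = {
    "tension pneumothorax": 0.80,
    "large pneumothorax": 0.85,
    "misplaced line": 0.85,
}


def route_finding(pathology: str, confidence: float,
                  thresholds: Optional[dict] = None) -> Route:
    """Interrupt only for listed pathologies that clear their own cutoff."""
    if thresholds is None:
        thresholds = URGENT_THRESHOLDS
    cutoff = thresholds.get(pathology)
    if cutoff is not None and confidence >= cutoff:
        return Route.INTERRUPT
    return Route.ON_REQUEST


print(route_finding("tension pneumothorax", 0.91))  # Route.INTERRUPT
print(route_finding("calcified granuloma", 0.99))   # Route.ON_REQUEST
```

Because the thresholds are plain data, changing what surfaces is a settings change for the radiologist, not a request to the vendor.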

Zero additional logins

If an AI tool requires a separate login, a separate window, or a separate workflow step, it will be abandoned or resented. The best AI integration is invisible: the radiologist opens the study and the AI findings are already there, seamlessly embedded in the viewer, as natural as the image itself.

Reduce documentation burden, don’t increase it

AI should pre-populate findings into the report structure, not create additional documentation requirements. If the AI identifies a finding and the radiologist agrees, that should flow into the report without extra work. If the radiologist disagrees, the override should be a single click, not a paragraph of justification.
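Sketched in code, the agree/disagree flow might look like the following. The `ReportDraft` class and its method names are hypothetical; the point is only that each path is a single action, with no free-text justification required on override.

```python
from dataclasses import dataclass, field


@dataclass
class ReportDraft:
    findings: list = field(default_factory=list)    # text that goes in the report
    overridden: list = field(default_factory=list)  # AI findings the reader rejected

    def accept(self, ai_finding: str) -> None:
        """Agreement: the AI finding flows straight into the report."""
        self.findings.append(ai_finding)

    def override(self, ai_finding: str) -> None:
        """Disagreement: one action, recorded without a written justification."""
        self.overridden.append(ai_finding)


draft = ReportDraft()
draft.accept("Large right pleural effusion")
draft.override("Possible nodule, left upper lobe")

print(draft.findings)    # ['Large right pleural effusion']
print(draft.overridden)  # ['Possible nodule, left upper lobe']
```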

Sharing matters too

Burnout in radiology isn’t only about reading cases. A significant portion of radiologist time is consumed by non-interpretive work: following up on findings, coordinating with referring physicians, managing the logistics of getting the right image to the right doctor at the right time.

When sharing a scan with a specialist requires burning a CD, uploading to a proprietary portal, or navigating a PACS-to-PACS transfer that takes days, the radiologist often becomes the bottleneck. They’re fielding calls from referring physicians asking, “Did you send the images?” They’re troubleshooting failed transfers. They’re doing IT work that has nothing to do with their training.

Medixshare addresses this directly. One-click sharing — a secure, encrypted link via SMS, email, or WhatsApp — eliminates the logistics that pile onto the radiologist’s non-reading workload. The specialist gets diagnostic-quality images instantly. The radiologist doesn’t get the follow-up call. The friction disappears.

This isn’t peripheral to the burnout conversation. Every minute a radiologist spends on image logistics is a minute they’re not reading cases, not resting, not doing the work they trained thirteen years to do. Removing sharing friction is a wellness intervention, whether or not it’s labeled as one.

The design philosophy that protects radiologists

MYAIRA was built by AI Bharata around a principle that is consistent with the JAMA Network Open findings: AI should reduce cognitive load, full stop.

- 3-second analysis, inline results. No separate window. No context switch. Findings appear alongside the image in under three seconds, embedded in the reading workflow.

- Confidence-scored, clinically filtered output. High-confidence findings surface prominently. Low-confidence incidentals are available on demand but don’t clutter the primary view. The radiologist decides what deserves attention.

- Human-in-the-loop by design. The AI flags. The radiologist diagnoses. Always. The system is built as a second reader, not a replacement — and the interface reflects that hierarchy.

- One-click sharing via Medixshare. When findings need to reach a referring physician or specialist, sharing takes seconds. No CD burning. No portal uploads. No follow-up calls asking where the images are.

- Free tier for small practices. 50 analyses per month at no cost. The practices that need burnout relief the most — small groups, rural centers, solo radiologists — shouldn’t be priced out of the solution.

The goal is not to add another tool to the radiologist’s already-overloaded workspace. The goal is to make the existing workspace lighter.

The AI industry needs to hear this

The radiology AI market is projected to exceed $3 billion by 2028. Hundreds of FDA-cleared algorithms are available. The technology is real and improving. But the burnout data is a warning: technical capability without workflow empathy is not just unhelpful — it’s harmful.

Radiologists don’t need more AI products. They need AI products designed by people who understand what it feels like to read 80 cases before lunch with a queue that never shrinks. That is the approach AI Bharata has taken with MYAIRA — building tools that fit the radiologist’s workflow rather than disrupting it.

That means fewer alerts, not more. Fewer clicks, not more. Fewer logins, fewer windows, fewer interruptions. It means AI that fits into the radiologist’s workflow like a well-designed instrument — present when needed, quiet when not, and never the source of the problem it was built to solve.

The question for every radiology department evaluating AI tools is no longer “Does this AI detect pathology accurately?” That’s necessary but not sufficient. The question is: “Will this AI make my radiologists’ day better or worse?”

If the answer isn’t clearly, demonstrably better — walk away.

Tired of AI that adds to your workload instead of reducing it? Try MYAIRA AI free — 50 analyses per month, sub-3-second results, zero added friction. Built for radiologists who need relief, not another dashboard.

Need to simplify scan sharing? Get started with Medixshare — one-click sharing that eliminates the logistics burden. Free, instant, and HIPAA-compliant.