Does Your Radiology AI Have a Bias Problem? What Patients and Providers Should Know

There is a question that most radiology AI vendors would prefer you not ask: does this algorithm perform equally well for all patients?

The honest answer, based on a growing body of peer-reviewed research, is no. Not yet. And in some cases, not even close.

Studies published in Science Advances and Nature Medicine have demonstrated that AI models trained on medical imaging data consistently underperform for certain demographic groups — particularly Black patients, women, and patients from lower socioeconomic backgrounds. The performance gaps are not trivial. They are clinically significant, and they have the potential to widen the very health disparities that equitable healthcare is supposed to narrow.

This is not an argument against AI in radiology. It is an argument for demanding transparency, validation, and accountability from every AI system that touches patient care.

The evidence is accumulating

The research on AI bias in medical imaging has moved beyond theoretical concern. Multiple high-impact studies have documented measurable performance disparities:

Chest X-ray models show racial performance gaps. A 2024 study in Science Advances analyzed commercial and academic chest X-ray AI models across demographically diverse datasets. The finding: models that achieved 94%+ sensitivity overall dropped to 85-88% for Black patients on several pathologies, including pneumonia and pleural effusion. The models were not explicitly trained on race — the bias emerged from structural imbalances in training data that correlated with demographic characteristics.

Underdiagnosis follows a pattern. A Nature Medicine analysis examined AI diagnostic performance across demographic subgroups and found a consistent pattern: models underdiagnose conditions in populations that were underrepresented in training datasets. Women were underdiagnosed for certain cardiac conditions. Patients from lower-income zip codes — a proxy for facilities with older imaging equipment producing lower-quality scans — had higher false-negative rates.

The training data problem is structural. Most large-scale medical imaging datasets originate from a small number of academic medical centers, predominantly in the United States and Western Europe. These institutions serve patient populations that do not reflect global or even national demographics. When a model learns to identify pneumothorax primarily from scans of white male patients at three Boston hospitals, its performance on scans from a community hospital in rural Mississippi or a clinic in Mumbai is an open question — and the data suggests the answer is “worse.”

Models can detect race even when clinicians cannot. Several studies have shown that AI models can predict a patient’s self-reported race from chest X-rays alone with over 90% accuracy, something radiologists cannot do from the same images. This means models may be using race-correlated image features as shortcuts in ways that researchers do not fully understand, and these shortcuts can systematically advantage or disadvantage specific populations.

Why this matters clinically

AI bias in radiology is not an abstract fairness concern. It is a patient safety issue.

When an AI second reader is less likely to flag a finding in a Black patient’s chest X-ray, that patient is more likely to have a delayed diagnosis. When a model underperforms on scans from lower-resource facilities with older equipment, the patients who already face the most barriers to care receive the least reliable AI assistance.

The cruelest irony is that AI is often marketed as a tool to democratize access to diagnostic expertise — to bring specialist-level analysis to underserved and rural communities that lack adequate radiologist coverage. If the AI performs worst precisely where it is needed most, it doesn’t bridge the gap. It reinforces it.

Consider the clinical scenarios:

- A community health center in a majority-Black neighborhood adopts AI-assisted chest X-ray reading to compensate for limited radiologist availability. The AI misses an early-stage consolidation at a rate 8% higher than it would for the same finding in a patient from the training distribution. The patient is discharged. The pneumonia progresses.

- A rural imaging center serving a predominantly low-income population uses AI triage to prioritize urgent findings. The model was validated on high-quality scans from urban academic centers. On the center’s older equipment, noise and artifact increase false-negative rates. The critical finding that should have been escalated sits in the queue.

These scenarios are illustrative, but they are not far-fetched. They are the predictable consequences of deploying AI systems without rigorous demographic validation.

The regulatory landscape is catching up

Regulators have noticed.

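The EU AI Act, whose obligations phase in from August 2026 (with requirements for AI embedded in already-regulated products such as medical devices following in August 2027 under the Act’s published timeline), treats medical AI systems as “high-risk” and imposes specific requirements: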

- Bias documentation. Developers must assess and document AI system performance across relevant demographic subgroups before deployment.

- Training data transparency. The composition, source, and representativeness of training datasets must be disclosed.

- Post-market monitoring. Ongoing surveillance must track whether performance disparities emerge or widen after deployment.

- Conformity assessment. High-risk AI systems must undergo assessment by a notified body before receiving CE marking for the EU market.

The EU AI Act is the most comprehensive AI regulation globally, but it is not alone. The FDA has issued guidance on diversity in clinical validation for AI/ML-based medical devices. The WHO has published ethics guidelines that explicitly address bias in health AI. And the OSTP Blueprint for an AI Bill of Rights, while non-binding, signals the policy direction in the United States.

For hospitals and imaging centers, the message is clear: the regulatory environment is moving toward mandatory bias assessment. Vendors that cannot demonstrate equitable performance will face market access barriers. And healthcare organizations that deploy biased AI may face both regulatory and liability exposure.

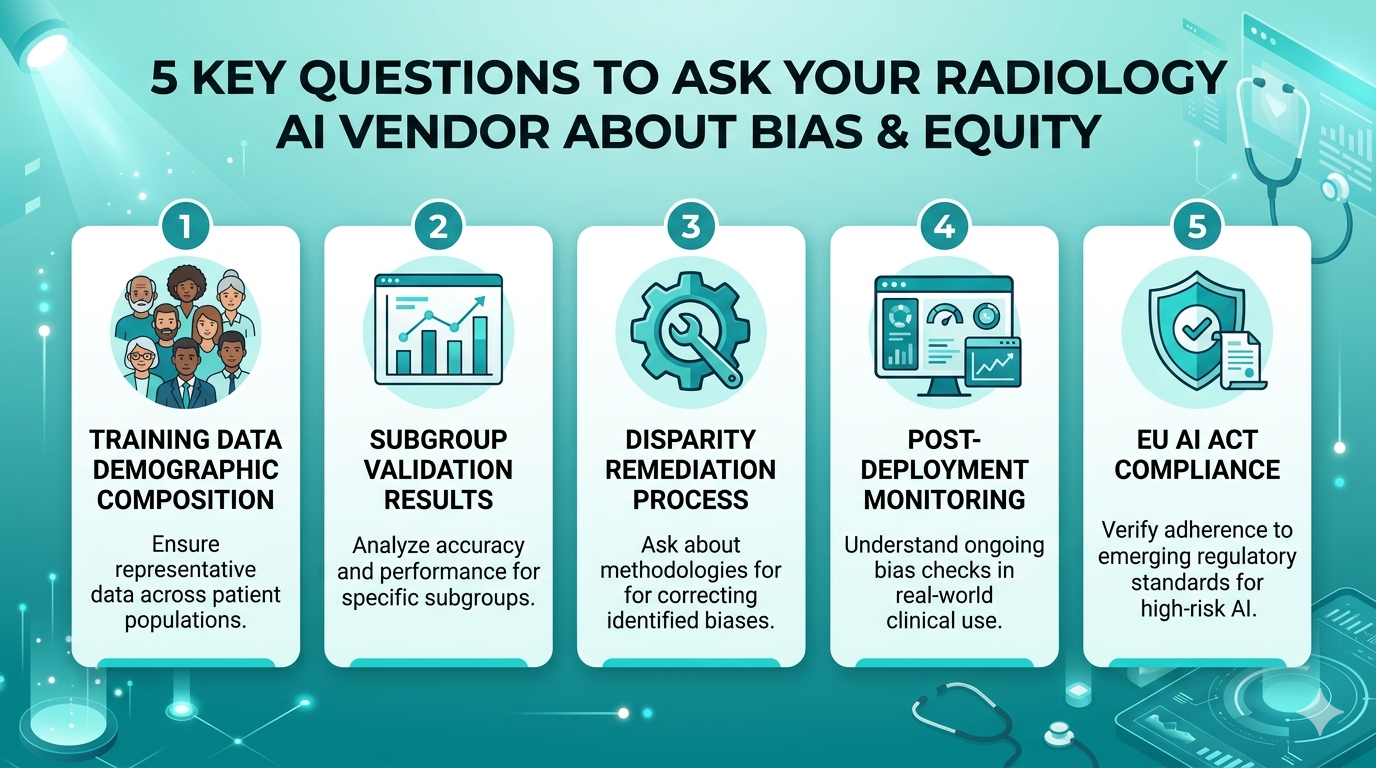

Five questions to ask your AI vendor

If you are evaluating or currently using a radiology AI product, these are the questions that matter:

1. What is the demographic composition of your training data?

A vendor that cannot answer this question — or will not — is a red flag. You need to know: how many patients from each demographic group are represented? What institutions contributed data? Is the dataset geographically diverse? If the model was trained on 90% white male patients from three academic hospitals, its generalizability is an assumption, not a fact.

2. Have you validated performance across demographic subgroups?

Overall accuracy numbers (sensitivity, specificity, AUC) are necessary but insufficient. Ask for subgroup analysis: performance by race, sex, age group, and imaging equipment type. If the vendor has not done this analysis, they cannot tell you whether their model is biased — because they have not checked.

3. How do you handle performance disparities when you find them?

Finding disparities is the first step. What matters is what happens next. Does the vendor retrain on more representative data? Apply algorithmic fairness techniques? Disclose the disparities to customers? Or bury them in footnotes? The answer tells you about the company’s values, not just its technology.

4. What post-deployment monitoring is in place?

A model validated on a diverse dataset today can drift tomorrow as patient populations, equipment, and clinical practices change. Ask whether the vendor monitors performance by subgroup in production — not just at the time of FDA clearance or CE marking.

5. Is your AI compliant with the EU AI Act’s bias documentation requirements?

Even if you are not in the EU, this question is a useful proxy for maturity. A vendor that has prepared for the world’s most rigorous AI regulation has done the work that matters. A vendor that has not is either behind or hoping the requirements won’t apply to them.
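To make question 2 concrete, here is a minimal Python sketch of a subgroup analysis. It assumes each validation case carries a ground-truth label, the model's prediction, and a demographic or equipment field. The field names, the `subgroup_metrics` and `make_cases` helpers, and all counts are invented for illustration; they do not come from any vendor or study.

```python
from collections import defaultdict

def subgroup_metrics(cases, group_key):
    """Compute sensitivity and specificity per subgroup from labeled cases.

    Each case is a dict with boolean 'truth' and 'pred' plus metadata fields.
    """
    tallies = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for case in cases:
        t = tallies[case[group_key]]
        if case["truth"]:
            t["tp" if case["pred"] else "fn"] += 1
        else:
            t["fp" if case["pred"] else "tn"] += 1
    results = {}
    for group, t in tallies.items():
        pos = t["tp"] + t["fn"]
        neg = t["tn"] + t["fp"]
        results[group] = {
            "n": pos + neg,
            "sensitivity": t["tp"] / pos if pos else None,
            "specificity": t["tn"] / neg if neg else None,
        }
    return results

def make_cases(group, tp, fn, tn, fp):
    """Build synthetic cases for one subgroup from confusion-matrix counts."""
    rows = ([(True, True)] * tp + [(True, False)] * fn
            + [(False, False)] * tn + [(False, True)] * fp)
    return [{"group": group, "truth": truth, "pred": pred} for truth, pred in rows]

# Synthetic counts chosen for illustration only (hypothetical groups "A" and "B").
cases = (make_cases("A", tp=171, fn=9, tn=800, fp=20)
         + make_cases("B", tp=17, fn=3, tn=95, fp=5))

by_group = subgroup_metrics(cases, "group")
# Relabel every case as one group to get the aggregate figures.
overall = subgroup_metrics([dict(c, group="all") for c in cases], "group")["all"]

print("overall sensitivity:", overall["sensitivity"])
for group, m in sorted(by_group.items()):
    print(group, m)
```

With these synthetic counts, overall sensitivity comes out at 94% while group B sits at 85%, which is exactly the kind of gap an aggregate number alone would hide.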

What equitable AI should look like

Bias in AI is not an unsolvable problem. It is an engineering and governance challenge that requires deliberate investment. Here is what responsible development looks like:

Diverse training data by design. Not as an afterthought or a marketing claim, but as a core dataset requirement. This means sourcing imaging data from multiple geographies, facility types, equipment generations, and patient demographics. It means actively seeking out underrepresented populations rather than relying on convenience samples from academic partners.
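As a rough illustration of what auditing dataset composition can involve, the Python sketch below tallies the share of each category in a metadata field and lists categories that fall under a chosen minimum. The record layout, the `composition` and `underrepresented` helpers, and the 25% threshold are assumptions made for this example, not a description of any real dataset or standard.

```python
from collections import Counter

def composition(records, field):
    """Return each category's share of the records for one metadata field."""
    counts = Counter(r.get(field, "unknown") for r in records)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}

def underrepresented(shares, min_share):
    """List categories whose share falls below a chosen minimum."""
    return sorted(c for c, s in shares.items() if s < min_share)

# Hypothetical metadata rows; a real audit would read the dataset's manifest.
records = (
    [{"sex": "M", "site": "academic"}] * 70
    + [{"sex": "F", "site": "academic"}] * 20
    + [{"sex": "F", "site": "community"}] * 10
)

sex_shares = composition(records, "sex")
site_shares = composition(records, "site")
flagged_sites = underrepresented(site_shares, min_share=0.25)

print(sex_shares)
print(site_shares)
print("below minimum share:", flagged_sites)
```

The right minimum share depends on the population the model will actually serve, so the threshold here is a placeholder rather than a recommendation.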

Subgroup validation as standard practice. Every model release should include published performance metrics across demographic subgroups. This should be as standard as reporting overall sensitivity and specificity. If a model performs 6% worse for Black patients, that should be in the documentation — and it should trigger remediation before deployment.
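One way to turn that remediation trigger into a release check is sketched below in Python. It compares each subgroup's sensitivity with a reference group and uses a Wilson score interval to separate a clear failure from a subgroup that is simply too small to judge. The `release_gate` function, the choice of reference group, the 5-point tolerance, and the counts are all assumptions for illustration, not a regulatory or vendor-defined rule.

```python
import math

def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (about 95% for z=1.96)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half

def release_gate(detected, positives, reference, max_gap=0.05):
    """Classify each subgroup's sensitivity against a reference group.

    detected / positives map subgroup -> counts among confirmed-positive cases.
    """
    ref_sens = detected[reference] / positives[reference]
    floor = ref_sens - max_gap  # lowest acceptable sensitivity
    verdicts = {}
    for group in positives:
        if group == reference:
            continue
        sens = detected[group] / positives[group]
        lower, _ = wilson_interval(detected[group], positives[group])
        if sens < floor:
            verdicts[group] = "fail"          # point estimate already too low
        elif lower < floor:
            verdicts[group] = "underpowered"  # too few cases to rule out a gap
        else:
            verdicts[group] = "pass"
    return verdicts

# Synthetic counts for hypothetical subgroups A (reference), B, C, and D.
detected = {"A": 171, "B": 17, "C": 28, "D": 930}
positives = {"A": 180, "B": 20, "C": 30, "D": 1000}

verdicts = release_gate(detected, positives, reference="A")
print(verdicts)
```

In this example group B fails outright, group D passes, and group C is marked underpowered: its point estimate looks acceptable, but with only 30 positive cases the interval is too wide to rule out an unacceptable gap. That third outcome matters, because small subgroups are where a bare pass/fail threshold is least trustworthy.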

Continuous monitoring in production. Training data is a snapshot. Real-world performance is a moving target. Equitable AI requires ongoing monitoring that detects performance drift across subgroups and triggers retraining when disparities emerge.
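A minimal sketch of such monitoring, in Python, might keep a rolling window of confirmed-positive cases per subgroup and raise an alert when sensitivity falls too far below the validated baseline. The `SubgroupMonitor` class, its window size, minimum case count, and 5-point drop threshold are hypothetical choices for illustration; a production system would also need confirmed ground truth, which often arrives with a delay.

```python
from collections import defaultdict, deque

class SubgroupMonitor:
    """Track rolling sensitivity per subgroup over recent confirmed positives."""

    def __init__(self, baseline, window=200, min_cases=50, max_drop=0.05):
        self.baseline = baseline    # validated sensitivity per subgroup
        self.min_cases = min_cases  # do not alert on tiny samples
        self.max_drop = max_drop    # tolerated drop below baseline
        self.hits = defaultdict(lambda: deque(maxlen=window))

    def record(self, group, detected):
        """Record whether the model flagged a case later confirmed positive."""
        self.hits[group].append(bool(detected))

    def alerts(self):
        """Return current sensitivity for subgroups that drifted past the limit."""
        out = {}
        for group, recent in self.hits.items():
            if len(recent) < self.min_cases or group not in self.baseline:
                continue
            current = sum(recent) / len(recent)
            if self.baseline[group] - current > self.max_drop:
                out[group] = round(current, 3)
        return out

# Synthetic stream for hypothetical subgroups A, B, and C.
monitor = SubgroupMonitor(baseline={"A": 0.95, "B": 0.93, "C": 0.94},
                          window=100, min_cases=50)
for i in range(100):
    monitor.record("A", i % 20 != 0)  # 95 of 100 detected
    monitor.record("B", i % 25 < 21)  # 84 of 100 detected
for i in range(10):
    monitor.record("C", False)        # too few cases to judge yet

current_alerts = monitor.alerts()
print(current_alerts)
```

Here only group B triggers an alert. Group C is skipped despite its poor early results because ten cases are below the minimum sample size, a deliberate trade-off between reacting quickly and reacting to noise.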

Transparency over marketing. No AI model is perfect for every patient. Honest vendors disclose limitations. They publish validation studies. They welcome independent evaluation. They do not hide behind aggregate accuracy numbers that obscure subgroup disparities.

MYAIRA’s commitment to equitable performance

We are not going to claim that MYAIRA has solved AI bias. No one has. But we are committed to transparency about where we are, where the gaps exist, and what we are doing about them.

Training data diversity. MYAIRA’s models are trained on imaging data from multiple countries, facility types, and equipment generations. We actively expand dataset representation for demographics where we identify performance gaps.

Subgroup validation. We test model performance across demographic categories including race, sex, age, and equipment type. When we find disparities, we disclose them and prioritize them for remediation in the next model iteration.

Compliance readiness. MYAIRA’s documentation and validation processes are aligned with the EU AI Act’s requirements for high-risk AI systems, including bias documentation, training data transparency, and post-market monitoring.

Open to scrutiny. We welcome independent validation of our models. If an external study finds a performance gap we missed, we want to know — because our patients’ health depends on it.

At AI Bharata, we see this not as a competitive advantage but as a baseline obligation. Every AI vendor in medical imaging should be doing this. The fact that many are not is a problem the industry needs to confront.

What patients should know

If you are a patient, you probably did not know that the AI analyzing your scan might perform differently based on your race, sex, or the type of machine that took your images. Most patients don’t. Most physicians don’t think to ask.

Here is what you can do:

- Ask whether AI was used in your imaging analysis. You have the right to know.

- Ask how the AI was validated. Your physician may not know the details, but the question prompts the conversation.

- Advocate for transparency. Support policies that require AI vendors to disclose performance across demographic groups.

- Choose platforms that prioritize equity. When sharing your scans with specialists via platforms like Medixshare by AI Bharata, the analysis should be as reliable for you as for any other patient.

The promise of AI in radiology — faster reads, broader access, better second reads — is real. But that promise only holds if the technology works equally well for everyone. Not just for the patients who look like the training data.

Want AI analysis you can trust? Try MYAIRA AI free — built with diverse training data, validated across demographic subgroups, and committed to equitable performance. 50 analyses per month, no contract required.

Need to share scans securely? Get started with Medixshare — instant, encrypted, HIPAA-compliant image sharing that works for every patient, everywhere.